Building dictionaries

Some of you have been brave enough to start to write new language pairs for Apertium. That makes me (and all of the Apertium troop) very happy and thankful, but more importantly, it makes Apertium useful to more people.

I want to share a lesson I have learned from building dictionaries: the importance of frequency estimates. For the new pairs to have the best possible coverage with a minimum of effort, it is very important to add words and rules in order of decreasing frequency, starting with the most frequent words and phenomena.

The reason that words should be added in order of frequency is quite intuitive: the higher the frequency, the more likely the word is to appear in the text you are trying to translate (see below for Zipf's law).

For example, in English you can almost be sure that the words "the" or "a" will appear in all but the most basic sentences; however, how many times have you seen "hypothyroidism" or "obelisk" written? The higher the frequency of the word, the more you "gain" from adding it.

Frequency

A person's intuition on which words are important or frequent can be very deceptive. Therefore, the best one can do is collect a lot of text (millions of words, if possible) which is representative of what one wants to translate, and study the frequencies of words and phenomena. Get it from Wikipedia or from a newspaper archive, or write a robot that harvests it from the Web.

It is quite easy to make a crude "hit parade" of words using a simple Unix command sequence (a single line):

$ cat mybigrepresentative.txt | tr ' ' '\012' | sort -f | uniq -c | sort -nr > hitparade.txt

(I took this from Unix for Poets, I think. More info on making frequency lists at Make a frequency list.)

Of course, this may be improved a lot but serves for illustration purposes.
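One possible improvement, as a rough sketch: also split on punctuation and fold case, so that "The", "the" and "the," are counted as one word. The function name hitparade is made up for this example. It assumes a byte-oriented tr such as GNU tr, which leaves non-ASCII letters untouched, so accented capitals are not lowercased.

```shell
# hitparade: read text on stdin, print "count word" lines, most frequent first.
# Assumption: whitespace and ASCII punctuation are the only word separators.
hitparade() {
    tr -s '[:space:][:punct:]' '[\n*]' |   # each run of separators becomes one newline
    tr '[:upper:]' '[:lower:]' |           # fold case (ASCII letters only with GNU tr)
    grep -v '^$' |                         # drop the empty line left by a leading separator
    sort | uniq -c |                       # count identical words
    sort -k1,1nr -k2,2                     # highest count first, ties in alphabetical order
}

# Usage (file names as in the example above):
#   hitparade < mybigrepresentative.txt > hitparade.txt
```

Note that this also splits on apostrophes and hyphens, which may or may not be what you want for your language.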

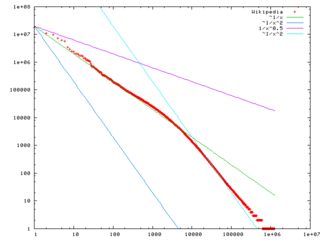

You will find interesting properties in this list. One is that if you multiply the rank of a word by its frequency, you get a number which is roughly constant. That's called Zipf's Law.

Another is that about half of the list consists of hapax legomena (words that appear only once).

Third, with about 1,000 words you may have 75% of the text covered.
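To check these properties on your own list, something like the following awk sketch may help. It assumes the list has the "count word" layout produced by uniq -c and is sorted by falling count. The function name hitparade_stats and the wording of the output are invented here.

```shell
# hitparade_stats FILE [TOPN]: summarise a frequency list sorted by falling count.
# TOPN defaults to 1000, the figure mentioned in the text.
hitparade_stats() {
    awk -v topn="${2:-1000}" '
        { count[NR] = $1; total += $1; if ($1 == 1) hapax++ }
        END {
            if (NR == 0) exit
            # tokens covered by the TOPN most frequent words
            for (r = 1; r <= NR && r <= topn; r++) covered += count[r]
            printf "types: %d\n", NR
            printf "tokens: %d\n", total
            printf "hapax share of types: %.1f%%\n", 100 * hapax / NR
            printf "coverage of top %d: %.1f%%\n", topn, 100 * covered / total
            # Zipf: rank * frequency at ranks 1, 10, 100, ... should stay in the same ballpark
            for (r = 1; r <= NR; r *= 10)
                printf "rank %d: rank*freq = %d\n", r, r * count[r]
        }' "$1"
}

# Usage:
#   hitparade_stats hitparade.txt 1000
```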

So use lists like these when you are building dictionaries.

If one of your languages is English, there are interesting lists:

Bear in mind, of course, that these lists are also based on a particular usage model of English, which is not "naturally occurring" English.

The same applies for other linguistic phenomena. Linguists tend to focus on very infrequent phenomena which are key to the identity of a language, or on what is different between languages. But these "jewels" are usually not the "building blocks" you would use to build translation rules. So do not get carried away. Trust only frequencies and lots of real text.

Sorting part of a bidix by frequency

Say you've created your hitparade. Here's a way of sorting part of a bidix (e.g. all nouns) by frequency.

First, extract the part you want to sort into a file of its own, e.g. /tmp/nouns, like this:

<e>       <p><l>potetlauv<s n="n"/><s n="nt"/></l><r>potetløv<s n="n"/><s n="nt"/></r></p></e>
<e r="RL"><p><l>potetlauv<s n="n"/><s n="nt"/></l><r>potetlauv<s n="n"/><s n="nt"/></r></p></e>
<e>       <p><l>haustlauv<s n="n"/><s n="nt"/></l><r>høstløv<s n="n"/><s n="nt"/></r></p></e>
<e>       <p><l>klauvdyr<s n="n"/><s n="nt"/></l><r>klovdyr<s n="n"/><s n="nt"/></r></p></e>

You'll probably want to keep all nouns in one place, so do this once per part-of-speech. Now, if you've also created a file /tmp/hitparade.txt as described above, run the following script in your terminal:

</tmp/hitparade.txt sed 's/^ *\([0-9][0-9]*\) */\1\t/' | awk -F'\t' -vside="l" -ventries=/tmp/nouns '

BEGIN{

while((getline < entries) > 0){

lm=$0; sub(".*<"side">","",lm);sub(/<s .*/,"",lm);gsub("<[bj]/>"," ",lm);gsub("</*[ag]/*>","",lm)

if(lm in lines) lines[lm]=lines[lm]"\n"

lines[lm]=lines[lm]$0

}

}

$2 in lines {

print lines[$2]

delete lines[$2]

}

END{for(lm in lines){ if(lines[lm]) print lines[lm] }}

' >/tmp/nouns.freqsorted

Remember to replace /tmp/hitparade.txt and /tmp/nouns with whatever file names you used, and replace -vside="l" with -vside="r" if you want to sort by <r> instead of <l>.

Now you can replace the lines in bidix which you cut out, with the contents of /tmp/nouns.freqsorted.

Getting corpora

Corpus catcher

Wikipedia dumps

For help in processing them, see:

The dumps need cleaning up (removing Wiki syntax and XML etc.), but can provide a substantial amount of text — both for frequency analysis and as a source of sentences for POS tagger training. It can take some work, and isn't as easy as getting a nice corpus, but on the other hand they're available in some 275 languages with at least 100 articles written in each.

You'll want the one entitled "Articles, templates, image descriptions, and primary meta-pages. -- This contains current versions of article content, and is the archive most mirror sites will probably want."

Use one of the methods on Wikipedia dumps to clean your corpus.

Or a simple shell script like (for Afrikaans):

$ bzcat afwiki-20070508-pages-articles.xml.bz2 | grep '^[A-Z]' | sed 's/$/\n/g' | sed 's/\[\[.*|//g' | sed 's/\]\]//g' | sed 's/\[\[//g' | sed 's/&[a-z]*;/ /g'

or, more simply:

$ bzcat afwiki-20070508-pages-articles.xml.bz2 | grep '^[A-Z]' | tr -d "[]" |

sed 's/|/ /g

s/&[a-z]*;/ /g'

Bech 18:41, 6 February 2012 (UTC)

This will give you approximately useful lists of one sentence per line (stripping out most of the extraneous formatting). Note, this presumes that your language uses the Latin alphabet; if it uses another writing system, you'll need to change that.
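If your language is not written in the Latin alphabet, one alternative is to drop lines that look like markup instead of keeping lines that start with A-Z. This is only a sketch: wiki_text_lines is a made-up name, and the set of line-initial characters treated as markup is a guess that will need tuning for your dump.

```shell
# wiki_text_lines: keep lines that do not start with typical XML or wiki markup.
# Assumption: running text never starts with one of < { } | = * # : ; !
wiki_text_lines() {
    grep -v '^[[:space:]]*[<{}|=*#:;!]' |   # drop XML tags, templates, tables, headings, lists
    grep -v '^[[:space:]]*$'                # drop empty lines
}

# Usage (hypothetical dump name):
#   bzcat xxwiki-pages-articles.xml.bz2 | wiki_text_lines | tr -d "[]"
```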

To build the frequency list straight from the dump, try something like (for Afrikaans):

$ bzcat afwiki-20070508-pages-articles.xml.bz2 | grep '^[A-Z]' | sed 's/$/\n/g' | sed 's/\[\[.*|//g' | sed 's/\]\]//g' | sed 's/\[\[//g' | sed 's/&[a-z]*;/ /g' | tr ' ' '\012' | sort -f | uniq -c | sort -nr > hitparade.txt

or, more simply:

$ bzcat afwiki-20070508-pages-articles.xml.bz2 | grep '^[A-Z]' | tr -d "[]" |

sed 's/|/ /g

s/&[a-z]*;/ /g

s/ /\n/g' | sort -f | uniq -c | sort -nr > hitparade.txt

Bech 18:41, 6 February 2012 (UTC)

Once you have this 'hitparade' of words, it is probably best to first skim off the top 20,000–30,000 into a separate file.

$ cat hitparade.txt | head -20000 > top.lista.20000.txt

Now, if you have already been working on a dictionary, chances are that this 'top list' contains words you have already added. You can remove the word forms you are already able to analyse using (for example, for Afrikaans):

$ cat top.lista.20000.txt | apertium-destxt | lt-proc af-en.automorf.bin | apertium-retxt | grep '\/\*' > words_to_be_added.txt

(here lt-proc af-en.automorf.bin will analyse the input stream of Afrikaans words and put an asterisk * on those it doesn't recognise)

For every 10 words or so you add, it's probably worth going back and repeating this step, especially for highly inflected languages — as one lemma can produce many word forms, and the wordlist is not lemmatised.
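To see whether each round of additions is paying off, you could count how many forms the analyser still marks with an asterisk. The sketch below assumes the usual analyser output, where each form appears as ^form/analysis$ and unknown forms as ^form/*form$. The function name unknown_share is invented, and grep -o is a GNU/BSD extension.

```shell
# unknown_share: read lt-proc analyser output on stdin and report the share of
# lexical units whose analysis is marked unknown with an asterisk.
unknown_share() {
    grep -o '\^[^$]*\$' |          # one lexical unit (^...$) per line
    awk '{ total++; if (index($0, "/*")) unknown++ }
         END { if (total) printf "unknown: %d of %d (%.1f%%)\n", unknown, total, 100 * unknown / total }'
}

# Usage (strip the counts first so that only the word forms are analysed):
#   awk '{print $2}' top.lista.20000.txt | apertium-destxt | lt-proc af-en.automorf.bin | unknown_share
```

This counts word forms, not running words, so a frequent unknown form weighs no more than a rare one.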

Generating monolingual dictionary entries

- Main article: Monodix

Cheap tricks and clever hacks to generate monodix entries:

- Run Extract

- Maybe use your existing monodix to constrain the possible pardefs, see Improved corpus-based paradigm matching

- See more ideas at Speeding up monodix creation

- Or follow the below procedure

Simple corpus-based paradigm-matching

If the language you're working with is fairly regular, and noun inflection is quite easy (for example English or Afrikaans), then the following script may be useful:

You'll need a large wordlist (of all forms, not just lemmata) and some existing paradigms. It works by first taking all singular forms out of the list, then looking for plural forms, then printing out those which have both singular and plural forms in Apertium format.

Note: These will need to be checked, as no language except Esperanto is that regular.

# set this to the location of your wordlist

WORDLIST=/home/spectre/corpora/afrikaans-meester-utf8.txt

# set the paradigm, and the singular and plural endings.

PARADIGM=sa/ak__n

SINGULAR=aak

PLURAL=ake

# set this to the part of the singular ending that is kept in the stem.

# e.g. [0:-1] means 'cut off one character', [0:-2] means 'cut off two characters' etc.

# here 'aak' becomes 'a', so that 'saak' gives the stem 'sa' expected by sa/ak__n.

ECHAR=`echo -n $SINGULAR | python3 -c 'import sys; print(sys.stdin.read()[0:-2])'`

PLURALS=`cat $WORDLIST | grep $PLURAL$`

SINGULARS=`cat $WORDLIST | grep $SINGULAR$`

CROSSOVER=""

for word in $PLURALS; do

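# replace the plural ending (only at the end of the word) with the singular one

SFORM=$(echo "$word" | sed "s/$PLURAL\$/$SINGULAR/")

# look for the singular form as a whole line in the wordlist

grep -qxF -- "$SFORM" "$WORDLIST"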

# if the form is found then append it to the list

if [ $? -eq 0 ]; then

CROSSOVER=$CROSSOVER" "$SFORM

fi

done

# print out the list

for pair in $CROSSOVER; do

echo '    <e lm="'$pair'"><i>'$(echo $pair | sed "s/$SINGULAR\$/$ECHAR/")'</i><par n="'$PARADIGM'"/></e>';

done

Generating bilingual dictionary entries

Cheap tricks and clever hacks for expanding bilingual dictionaries:

- Cross two language pairs with crossdics – turn A-B and B-C into A-C

- Bilingual dictionary discovery for more complicated Crossdics-like pairings

- Make a TMX with bitextor

- Grab some translations from Wikidata

- Run ReTraTos on word alignments if you have a parallel corpus

- or just Giza and look through the symmetrised dictionary created; see Extracting bilingual dictionaries with Giza++

- or just Anymalign / fast_align and look through the generated word alignments

- Spell a source language frequency list using a target-language spell checker

- maybe run a transliterator first

- maybe filter candidates by occurrence in an aligned sentence

- maybe run some regexes on it first to hardcode some subword correspondences

- or even create a full-blown sub-word translator using the regex/FST system of your choice

- Decompound words and run through your existing bidix

- See Automatically generating compound bidix entries for one approach, scoring with an LM

- Maybe decompound your bidix as well (requires an analyser that can give compound analyses even if full analyses exist) and use that to create a bigger compound-part-bidix to run the above procedure on

- Cluster compound-parts to find translations of parts through compounds with other parts

- Say you have big monolingual dictionaries and want to expand the bidix. E.g. the sme word bearaš is missing in sme-nob, but it appears as a prefix in lots of monolingual compounds, e.g. bearašviessu, bearašásodat. Many of the _suffixes_ are in the bidix. So look up these suffixes, and find which nob-words take the translations of the suffixes. The ones that take the most of these suffix-translations are likely candidates for a prefix-translation.

- Generate possible Multiword Compounds from existing multiword compounds, check for them in corpus

- as done in http://www.mt-archive.info/10/MTS-2013-Sato.pdf

- if your bidix has mwe's "A X", "B X" and "A Y", then a candidate mwe is "B Y"

- Find Repeated Words within two aligned paragraphs – these are possible translation terms

- as done in http://www.mt-archive.info/RANLP-2005-Giguet.pdf

- lemmatise first

- Find Inline Bitext, terms translated into the target language occurring in a source-language text, by using word-level language identification

- Use Wiktionary (see below)

- Run word_align to align words using the tetragrams of a small bilingual corpus ("word_align and char_align were designed to work robustly on texts that are smaller and more noisy than the Hansards")
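The multiword recombination idea from the list above can be sketched in a few lines of awk. This is a simplified reading of it, not the method of the cited paper: it assumes two-word expressions, one per line, and the function name mwe_candidates is invented. Whenever two first words share a second word, every second word seen with one of them is proposed for the other.

```shell
# mwe_candidates: read two-word expressions on stdin ("A X", one per line) and
# print unseen combinations "B Y" where B shares a second word with some A
# and "A Y" is attested.
mwe_candidates() {
    awk 'NF == 2 { seen[$1 " " $2] = 1; firsts[$1]; seconds[$2] }
         END {
             for (a in firsts) for (b in firsts) {
                 if (a == b) continue
                 # do a and b occur with a common second word?
                 shared = 0
                 for (x in seconds)
                     if (((a " " x) in seen) && ((b " " x) in seen)) shared = 1
                 if (!shared) continue
                 # propose every second word of a that b has not been seen with
                 for (y in seconds)
                     if (((a " " y) in seen) && !((b " " y) in seen)) print b " " y
             }
         }' | sort -u
}

# Usage (hypothetical file name):
#   mwe_candidates < bidix-mwes.txt > mwe-candidates.txt
```

The candidates still need to be checked against a corpus, as the list says, since most recombinations will not be real expressions.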

Ways to filter out good candidates, in general:

- Corpus frequency – always start at the top

- Occurrence in aligned sentences – these are typically good

- maybe lemmatise corpus first, or look for word that's edit-distance<=2 from source-side of candidate word

- Frequency similarity – source and target words should have "similar" normalised frequencies

- Generated word has a monolingual analysis – assuming your monodix is bigger than your bidix
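The frequency-similarity filter can be approximated by comparing relative frequencies from two hitparade files. The sketch below is one guess at what "similar" could mean: it keeps a candidate pair if the two relative frequencies differ by at most a given factor (10 by default, an arbitrary choice). The function name freq_filter, the layout of the candidate list (source word, then target word, separated by whitespace) and the threshold are all assumptions.

```shell
# freq_filter SRC_HITPARADE TRG_HITPARADE CANDIDATES [MAXRATIO]
# Prints "source<TAB>target<TAB>ratio" for pairs whose relative frequencies
# differ by at most MAXRATIO. Pairs with a word missing from either list are dropped.
freq_filter() {
    awk -v max="${4:-10}" '
        FILENAME == ARGV[1] { src[$2] = $1; srctotal += $1; next }   # source "count word" list
        FILENAME == ARGV[2] { trg[$2] = $1; trgtotal += $1; next }   # target "count word" list
        ($1 in src) && ($2 in trg) {
            s = src[$1] / srctotal      # normalised source frequency
            t = trg[$2] / trgtotal      # normalised target frequency
            ratio = (s > t) ? s / t : t / s
            if (ratio <= max) printf "%s\t%s\t%.2f\n", $1, $2, ratio
        }' "$1" "$2" "$3"
}

# Usage (hypothetical file names):
#   freq_filter hitparade.sme.txt hitparade.nob.txt candidates.txt 10
```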

Getting bilingual dictionary entries from Wiktionary

A cheap way of getting bilingual dictionary entries between a pair of languages is as follows:

First grab yourself a wordlist of nouns in language x; for example, grab them out of the Apertium dictionary you are using:

$ cat <monolingual dictionary> | grep '<i>' | grep '__n\"' | awk -F'"' '{print $2}'

Next, write a basic script, something like:

#!/bin/sh

#language to translate from

LANGF=$2

#language to translate to

LANGT=$3

#filename of wordlist

LIST=$1

for LWORD in `cat $LIST`; do

TEXT=`wget -q http://$LANGF.wikipedia.org/wiki/$LWORD -O - | grep 'interwiki-'$LANGT`;

if [ $? -eq '0' ]; then

RWORD=`echo $TEXT |

cut -f4 -d'"' | cut -f5 -d'/' |

python3 -c 'import sys, urllib.parse; print(urllib.parse.unquote(sys.stdin.read()))' |

sed 's/(\w*)//g'`;

echo '<e><p><l>'$LWORD'<s n="n"/></l><r>'$RWORD'<s n="n"/></r></p></e>';

fi;

sleep 8;

done

Note: The "sleep 8" is so that we don't put undue strain on the Wikimedia servers.

If you save this as iw-word.sh, then you can use it at the command line:

$ sh iw-word.sh <wordlist> <language code from> <language code to>

For example, to retrieve a bilingual wordlist from English to Afrikaans, use:

$ sh iw-word.sh en-af.wordlist en af

The method is of variable reliability. Reports of between 70% and 80% accuracy are common. It is best for unambiguous terms, but works all right where terms retain ambiguity through languages.

Any correspondences produced by this method must be checked by native or fluent speakers of the languages in question.

Further reading

- Mark Pagel, Quentin D. Atkinson & Andrew Meade (2007) "Frequency of word-use predicts rates of lexical evolution throughout Indo-European history". Nature 449, 665

- "Across all 200 meanings, frequently used words evolve at slower rates and infrequently used words evolve more rapidly. This relationship holds separately and identically across parts of speech for each of the four language corpora, and accounts for approximately 50% of the variation in historical rates of lexical replacement. We propose that the frequency with which specific words are used in everyday language exerts a general and law-like influence on their rates of evolution."