User:GD/proposal


Contact information

Name: Evgenii Glazunov

Location: Moscow, Russia

University: NRU HSE, Moscow (National Research University Higher School of Economics), 3rd-year student

E-mail: glaz.dikobraz@gmail.com

IRC: G_D

Timezone: UTC+3

Github: https://github.com/dkbrz

Am I good enough?

Education: Bachelor's Degree in Fundamental and Computational Linguistics (2015-2019) at NRU HSE

Courses:

- Programming (Python, R, Flask, HTML, XML, Machine Learning)

- Morphology, Syntax, Semantics, Typology/Language Diversity

- Mathematics (Discrete Mathematics, Linear Algebra and Calculus, Probability Theory, Mathematical Statistics, Computability and Complexity, Logic, Graphs and Topology, Theory of Algorithms)

- Latin, Latin in modern Linguistics, Ancient Literature

Languages: Russian (native), English (academic), French (A2-B1), Latin (a bit), German (A1)

Personal qualities: responsibility, punctuality, diligence, passion for programming, perseverance, resistance to stress

Why am I interested in machine translation? Why am I interested in Apertium?

Information circulates so fast that there is no time to spend on human translation. I am truly interested in formal methods and models because, as I see it, they represent the way any language is constructed. Despite some exceptions, language is in general very logical, and the main problem is finding a proper systematic description. Apertium is a powerful platform for building impressive rule-based engines. I think rule-based translation is very promising if we provide enough data and effective analysis.

Which of the published tasks am I interested in? What do I plan to do?

I would like to work on Bilingual dictionary enrichment via graph completion.

The main idea is to represent dictionaries as a graph, with words as nodes and translations as edges, and to create tools that find translations through the edges between words in this graph. Large graphs are hard to work with because the computations are expensive. However, some tools and libraries are designed specifically for this purpose and are efficient. The developer's task is to apply these tools to this specific kind of dictionary data.

I have worked with NetworkX because it runs fully on my current Windows setup. I plan to switch to graph-tool, which is much more efficient on large graphs.
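Below is a minimal sketch of the graph representation. It assumes each bidix entry has already been parsed into a pair of (language, lemma, POS) tuples. To stay dependency-free it uses a plain adjacency dict. The function names and toy entries are hypothetical. The sketch finds candidates through exactly one pivot language (L1-L3-L2).

```python
from collections import defaultdict

def build_graph(pairs):
    """Build an undirected translation graph: words are nodes, bidix entries are edges."""
    graph = defaultdict(set)
    for a, b in pairs:
        graph[a].add(b)
        graph[b].add(a)
    return graph

def pivot_candidates(graph, word, target_lang):
    """Target-language words reachable through exactly one pivot word (L1-L3-L2)."""
    found = set()
    for pivot in graph.get(word, ()):
        for candidate in graph.get(pivot, ()):
            # Nodes are (lang, lemma, pos) tuples, so candidate[0] is the language
            if candidate[0] == target_lang and candidate != word:
                found.add(candidate)
    return found

# Hypothetical toy entries taken from an eng-fra and a fra-spa dictionary
pairs = [
    (("eng", "house", "n"), ("fra", "maison", "n")),
    (("fra", "maison", "n"), ("spa", "casa", "n")),
]
graph = build_graph(pairs)
print(pivot_candidates(graph, ("eng", "house", "n"), "spa"))
# {('spa', 'casa', 'n')}
```

With NetworkX, `G.add_edge(a, b)` and `G.neighbors(n)` would replace the dict operations.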

List of main ideas:

- Use classes to represent the data in the most appropriate form

- Work with subgraphs (connected components) to reduce computational complexity

- Filtering algorithms to serve the same aim

- Vectorization to make all functions more efficient

- Developing different metrics to assess translation quality

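- Evaluation of these metrics

Word object

The basic attributes of a word are its lemma, language and POS. The representation and string format can be adjusted to the developer's needs. The format used here, e.g. 'EN_first_adj', makes the output of functions easy to check. One of the most important classes is Word:

```python
class Word:
    """A dictionary entry identified by lemma, language and part of speech."""

    def __init__(self, lemma, lang, pos):
        self.lemma = lemma
        self.lang = lang
        self.pos = pos

    def __str__(self):
        # String form LANG_lemma_pos, e.g. EN_first_adj
        return str(self.lang) + '_' + str(self.lemma) + '_' + str(self.pos)

    __repr__ = __str__

    def __eq__(self, other):
        if not isinstance(other, Word):
            return NotImplemented
        return (self.lemma == other.lemma and self.lang == other.lang
                and self.pos == other.pos)

    def __hash__(self):
        # Consistent with __eq__: equal words have identical string forms
        return hash(str(self))
```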

Filtration

Filtration is necessary to filter sets of words by their parameters (in most cases, POS and language).
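Here is a minimal sketch of filtration. It assumes words are immutable records with `lemma`, `lang` and `pos` fields. The frozen dataclass below is a stand-in for the Word class, and the helper name `filter_words` is hypothetical. Passing `None` for a criterion leaves that criterion unconstrained.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Word:
    """Stand-in for the Word class; frozen so instances are hashable."""
    lemma: str
    lang: str
    pos: str

def filter_words(words, lang=None, pos=None):
    """Keep only the words that match the given language and/or POS."""
    return {
        w for w in words
        if (lang is None or w.lang == lang) and (pos is None or w.pos == pos)
    }

words = {Word("first", "EN", "adj"), Word("premier", "FR", "adj"), Word("house", "EN", "n")}
print(sorted(w.lemma for w in filter_words(words, lang="EN", pos="adj")))
# ['first']
```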

Subgraphs

The general graph consists of many connected components, so a search only needs to consider the component that contains the source word. This greatly increases efficiency.
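Below is a minimal sketch of restricting the search to one connected component, found by breadth-first search over an adjacency dict. The toy graph and the helper name `component_of` are hypothetical. In NetworkX, `nx.node_connected_component(G, n)` returns the same node set.

```python
from collections import deque

def component_of(graph, start):
    """Return the set of nodes in the connected component containing start (BFS)."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in graph.get(node, ()):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return seen

# Hypothetical toy graph with two separate components
graph = {
    "en_house": {"fr_maison"},
    "fr_maison": {"en_house", "es_casa"},
    "es_casa": {"fr_maison"},
    "en_cat": {"fr_chat"},
    "fr_chat": {"en_cat"},
}
component = component_of(graph, "en_house")
# Any further search for "en_house" only needs this subgraph
subgraph = {n: graph[n] for n in component}
print(sorted(component))
# ['en_house', 'es_casa', 'fr_maison']
```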

Directed graphs

- take into account LR-only and RL-only entries (translations valid in one direction only)

- avoid some cycles

- in the translation subgraph, target-language nodes can be given only incoming edges, which makes them final states, as in a finite-state machine. The search never leaves such a node, because reaching it means we have already found a translation (a simple path from the source word to a target-language word)

The last point is very important: it turned out that the search can fall into an endless loop. Subgraphing could solve this, but it is less efficient than the finite-state solution for several reasons: n can be large, and logically the finite-state approach seems more natural.
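Here is a minimal sketch of this idea. It assumes a directed adjacency dict in which a bidirectional bidix entry gives edges both ways and an LR-only entry gives a single edge. Target-language nodes act as final states: a path that reaches one is recorded and never extended. Paths are kept simple (no node repeated), so cycles cannot cause an endless loop. The function name, the `max_edges` cutoff and the toy graph are hypothetical.

```python
def find_translations(graph, source, target_lang, max_edges=4):
    """Collect simple paths from source to target-language words.

    Target-language nodes are treated as final states: a path that reaches
    one is recorded and never extended further.
    """
    results = {}
    stack = [(source, [source])]
    while stack:
        node, path = stack.pop()
        for nxt in graph.get(node, ()):
            if nxt in path:                   # simple paths only: never revisit a node
                continue
            new_path = path + [nxt]
            if nxt[0] == target_lang:         # final state: record, do not expand
                results.setdefault(nxt, []).append(new_path)
            elif len(new_path) <= max_edges:  # extending keeps paths within max_edges
                stack.append((nxt, new_path))
    return results

# Hypothetical directed toy graph; nodes are (lang, lemma) tuples.
graph = {
    ("en", "house"): [("fr", "maison")],      # LR-only entry: no edge back to en
    ("fr", "maison"): [("es", "casa"), ("ca", "casa")],
    ("ca", "casa"): [("fr", "maison"), ("es", "casa")],
    ("es", "casa"): [("fr", "maison"), ("ca", "casa")],
}
for target, paths in find_translations(graph, ("en", "house"), "es").items():
    print(target, len(paths))
# ('es', 'casa') 2
```

The two paths found are en-fr-es and en-fr-ca-es. Searching from `("es", "casa")` towards `"en"` returns nothing, because the LR-only entry provides no edge back to English.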

Vectorization

Vectorizing functions and avoiding explicit loops noticeably improves efficiency.

Metrics

This is possibly the most important part, since we need to evaluate the variants. The list of possible translations can be long, and so can the list of paths leading to these final nodes. To choose the best variant, we need a formula (or, better, a set of formulae) to rank them. I am thinking of the following algorithm:

- take the general graph with one language pair removed

- run translation for this pair and find the variants chosen by each formula

- compute accuracy by comparing them with the existing translations

After running this on different language pairs, we get plenty of data to choose one formula or a combination of them.
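The evaluation loop above can be sketched as follows. The sketch assumes the graph is given as a list of undirected word-pair edges with (lang, lemma) nodes. The scoring formula here, the number of distinct pivot words linking two nodes, is only one hypothetical candidate metric. The removed pair's entries serve as the gold standard, and ties in `max` are broken arbitrarily.

```python
from collections import defaultdict

def build(edges):
    """Undirected adjacency sets from (node, node) edges."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph

def score_by_pivots(graph, word, target_lang):
    """Hypothetical metric: number of distinct pivot words linking word to each candidate."""
    scores = defaultdict(int)
    for pivot in graph.get(word, ()):
        for cand in graph.get(pivot, ()):
            if cand[0] == target_lang:
                scores[cand] += 1
    return scores

def leave_one_pair_out(edges, lang_a, lang_b, metric):
    """Remove all lang_a-lang_b edges, predict them back with metric, return accuracy."""
    pair = {lang_a, lang_b}
    held_out = {(a, b) if a[0] == lang_a else (b, a)
                for a, b in edges if {a[0], b[0]} == pair}
    graph = build(e for e in edges if {e[0][0], e[1][0]} != pair)
    gold = defaultdict(set)
    for a, b in held_out:
        gold[a].add(b)
    correct = 0
    for word, translations in gold.items():
        scores = metric(graph, word, lang_b)
        if scores and max(scores, key=scores.get) in translations:
            correct += 1
    return correct / len(gold) if gold else 0.0

# Hypothetical toy dictionaries
edges = [
    (("en", "house"), ("fr", "maison")), (("en", "cat"), ("fr", "chat")),
    (("fr", "maison"), ("es", "casa")), (("fr", "maison"), ("es", "hogar")),
    (("fr", "chat"), ("es", "gato")),
    (("en", "house"), ("de", "haus")), (("de", "haus"), ("es", "casa")),
    (("en", "house"), ("es", "casa")), (("en", "cat"), ("es", "gato")),
]
print(leave_one_pair_out(edges, "en", "es", score_by_pivots))
# 1.0
```

Other formulae can be passed in as `metric` and compared on the same held-out pairs.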

The result of this work will be a tool that checks dictionaries, finds new word pairs that can be included in a bidix, and generates entries to insert into the dictionaries.
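Below is a minimal sketch of generating such insertions with Python's standard `xml.etree.ElementTree`. It assumes the Apertium bidix entry layout `<e><p><l>lemma<s n="pos"/></l><r>lemma<s n="pos"/></r></p></e>`, with an optional `r="LR"` or `r="RL"` attribute for one-way entries. The function name is hypothetical. The tag names passed as `pos` must match the symbols the dictionary actually defines.

```python
import xml.etree.ElementTree as ET

def bidix_entry(left, right, pos, direction=None):
    """Build one bidix <e> element for a left/right lemma pair sharing a POS tag."""
    e = ET.Element("e")
    if direction:                       # e.g. "LR" or "RL" for one-way entries
        e.set("r", direction)
    p = ET.SubElement(e, "p")
    for side, lemma in (("l", left), ("r", right)):
        el = ET.SubElement(p, side)
        el.text = lemma
        ET.SubElement(el, "s", n=pos)   # POS symbol, e.g. <s n="n"/>
    return ET.tostring(e, encoding="unicode")

print(bidix_entry("house", "casa", "n"))
# <e><p><l>house<s n="n" /></l><r>casa<s n="n" /></r></p></e>
```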

See some examples of my ideas in my Python notebook.

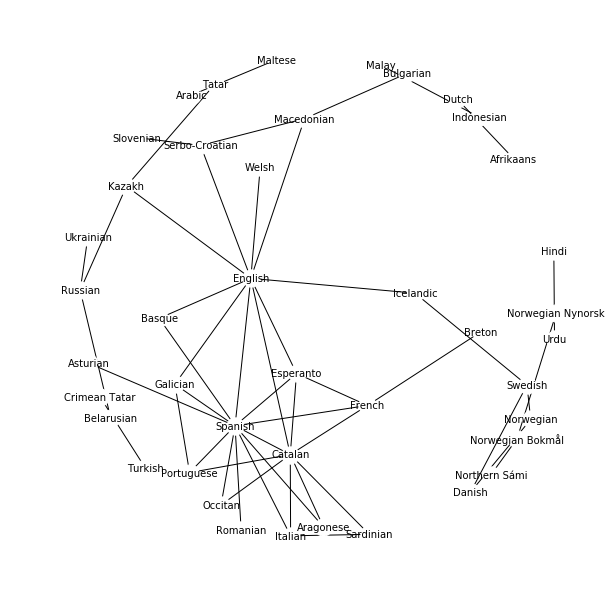

There is also a graph of released language pairs that shows possible translation routes via other languages:

Proposal

Why should Google and Apertium sponsor it? How will it benefit society, and whom?

I think there is a lot of mathematics in language. A graph representation of dictionaries is an exciting idea because it provides a kind of cross-validation and an internal source of information within the system. This information helps to fill lacunae that appear while a dictionary is being created. Expanding the bidix in this way will improve translation quality.

Graph representation is very promising because it mirrors a philosophical model of metalinguistic knowledge. Knowing several languages, I know that a rare word can be hard to recall. Sometimes it is easier to translate from French to English and only then to Russian, because I have forgotten the Russian-French word pair. This graph representation works just like my memory: we cannot recall what a word from L1 is in L2. Hmm, we know L1-L3 and L3-L2. Oh, that's the link we need. Now we know the L1-L2 word pair. So, as we work on natural language processing, let's use natural instruments and systems as well.

The main benefit of this project is reducing human labor by automating part of dictionary development.

- Finding lacunae in existing dictionaries (missing words).

- Dictionary enrichment based on an algorithm that offers candidate translations and evaluates them.

- A potential base for creating new pairs.

Coding Challenge

An .ipynb notebook with the current state of my coding challenge

Week-by-week work plan

Post application period

1. Refreshing and obtaining more specific knowledge about graph theory (during my current course and from extra sources)

2. Thinking about a statistical approach that could be relevant to this particular task

3. Theoretical research on general algorithmic optimisation

Community bonding period

1. Discussing my considerations and ideas with mentors

2. Including relevant particularities and details

3. Correcting the work plan according to new ideas

First phase

Week 1: Collecting data, preprocessing

Week 2: Experiments on small datasets with existing evaluation pairs (comparing an existing bidix with one created artificially via the graph)

Week 3: Error analysis and improvement ideas

Week 4: Improving the code, preliminary runs on medium-sized data, first-phase results, correcting plans

Second phase

Week 5: Optimization work based on medium-data experience

Week 6: Evaluating and improving metrics (experiments), estimating the effect of optimization

Week 7: Running on big data

Week 8: Finding errors and possible optimizations, preliminary results

Third phase

Week 9: Stable version on existing pairs, preprocessing of in-work pairs, experiments

Week 10: Final version of the model, actual dictionary enrichment

Week 11: Evaluate results, estimate how much better dictionaries became

Week 12: Documentation, cleaning up the code

Final evaluation

Non-Summer-of-Code plans you have for the Summer

GSoC is the only project I have this summer. I have some exams at the end of June.