Talk:Automatically trimming a monodix

Alternate implementation methods

Trimming while reading the XML file might use less memory (untested), but seems like more work, since pardefs are read and turned into FSTs before we reach an "initial" state, and are then attached at the end of regular entries.

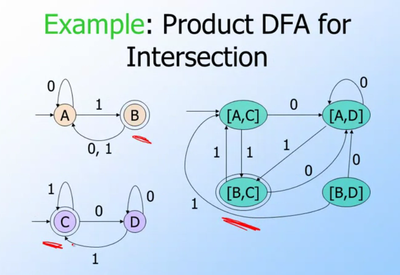

The product automaton for intersection marks as final only those state pairs where both components are final in the original automata. Minimisation removes unreachable state pairs. However, this quickly blows up in memory usage, since it first creates all possible state pairs (the Cartesian product), not just the useful ones.

https://github.com/unhammer/lttoolbox/branches has some experiments; see e.g. the branches product-intersection and df-intersection (the latter is the currently used implementation).
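
To illustrate the memory difference, here is a minimal Python sketch that compares building every state pair up front with exploring only the pairs reachable depth-first from the start pair. It is not the code from either branch; the dict-based DFA layout, the toy automata and the names `full_product_states`, `reachable_product` and `accepts` are all assumptions made for the example.

```python
from itertools import product

# Hypothetical toy DFAs: {"start": s, "finals": {...}, "trans": {state: {symbol: state}}}
ALPHABET = ["cake", "beer", "wine", "n", "sg", "pl"]

# Accepts (cake|beer) n (sg|pl)
MONODIX = {
    "start": 0,
    "finals": {3},
    "trans": {0: {"cake": 1, "beer": 1}, 1: {"n": 2}, 2: {"sg": 3, "pl": 3}, 3: {}},
}

# Accepts (beer|wine) n .*
BIDIX = {
    "start": 0,
    "finals": {2},
    "trans": {0: {"beer": 1, "wine": 1}, 1: {"n": 2}, 2: {sym: 2 for sym in ALPHABET}},
}


def full_product_states(a, b):
    """Create every state pair up front (the Cartesian product)."""
    return set(product(a["trans"], b["trans"]))


def reachable_product(a, b):
    """Intersect two DFAs, creating only pairs reachable from the start pair."""
    start = (a["start"], b["start"])
    trans = {start: {}}
    stack = [start]
    while stack:
        p, q = stack.pop()
        for sym, p2 in a["trans"][p].items():
            q2 = b["trans"][q].get(sym)
            if q2 is None:
                continue  # b cannot follow this symbol, so the pair is never built
            pair = (p2, q2)
            trans[(p, q)][sym] = pair
            if pair not in trans:
                trans[pair] = {}
                stack.append(pair)
    # A pair is final only if both of its components are final.
    finals = {(p, q) for (p, q) in trans if p in a["finals"] and q in b["finals"]}
    return {"start": start, "finals": finals, "trans": trans}


def accepts(dfa, symbols):
    """Run a DFA over a sequence of symbols."""
    state = dfa["start"]
    for sym in symbols:
        state = dfa["trans"][state].get(sym)
        if state is None:
            return False
    return state in dfa["finals"]


TRIMMED = reachable_product(MONODIX, BIDIX)
```

On these toy automata the full product has 12 state pairs, while only 4 are reachable from the start pair; `accepts(TRIMMED, ["beer", "n", "pl"])` is true and `accepts(TRIMMED, ["cake", "n", "sg"])` is false.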

Compounds vs trimming in HFST

The sme.lexc can't be trimmed using the simple HFST trick, due to compounds.

Say you have cake n sg, cake n pl, beer n pl and beer n sg in monodix, while bidix has beer n and wine n. The HFST method without compounding is to intersect (cake|beer) n (sg|pl) with (beer|wine) n .* to get beer n (sg|pl).

But HFST represents compounding as a transition from the end of the singular noun to the beginning of the (noun) transducer, so a compounding HFST actually looks like

- ((cake|beer) n sg)*(cake|beer) n (sg|pl)

The intersection of this with

- (beer|wine) n .*

is

- beer n sg ((cake|beer) n sg)*(cake|beer) n (sg|pl) | beer n (sg|pl)

when it should have been

- (beer n sg)*beer n (sg|pl)
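
The problem can be checked on a small scale with ordinary regular expressions. The sketch below uses Python's `re` module on space-separated symbols rather than HFST itself, and only enumerates words of at most two parts; the names `MONODIX`, `BIDIX`, `WANTED`, `kept` and `leaked` are made up for the example.

```python
import re
from itertools import product

# The three languages from the discussion, over space-separated symbols.
MONODIX = re.compile(r"((cake|beer) n sg )*(cake|beer) n (sg|pl)")
BIDIX = re.compile(r"(beer|wine) n .*")
WANTED = re.compile(r"(beer n sg )*beer n (sg|pl)")

# Candidate analyses: one or two parts, each "<noun> n <number>".
units = [f"{noun} n {num}" for noun in ("cake", "beer", "wine") for num in ("sg", "pl")]
candidates = [" ".join(parts) for length in (1, 2) for parts in product(units, repeat=length)]

# A string is in the intersection iff both patterns match it completely.
kept = {s for s in candidates if MONODIX.fullmatch(s) and BIDIX.fullmatch(s)}

# Strings that survive trimming although a later part is missing from the bidix.
leaked = {s for s in kept if not WANTED.fullmatch(s)}
```

Here `leaked` contains compounds such as `beer n sg cake n pl`: the bidix pattern only constrains the first part, so `cake` survives as a later part even though it is not in the bidix.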

Lttoolbox doesn't represent compounding by extra circular transitions, but instead by a special restart symbol interpreted while analysing.

lt-trim is able to understand compounds by simply skipping the compound tags.