Apertium going SOA

This page is out of date as a result of the migration to GitHub. Please update this page with new documentation and remove this warning. If you are unsure how to proceed, please contact the GitHub migration team.

NOTE: After Apertium's migration to GitHub, this tool is read-only on the SourceForge repository and does not exist on GitHub. If you are interested in migrating this tool to GitHub, see Migrating tools to GitHub.

UPDATE: This project has actually moved from branches/gsoc2009/deadbeef/ to trunk/, and its sources are available here: https://apertium.svn.sourceforge.net/svnroot/apertium/trunk/apertium-service/

The source can be easily browsed here: http://apertium.svn.sourceforge.net/viewvc/apertium/trunk/apertium-service/

A quick web interface to a working prototype of the service is available here: http://www.neuralnoise.com/ApertiumWeb2/

Simple installation instructions are at Apertium-service.

Contents

- 1 Introduction

- 2 Service's internals

- 3 Development status - 22/05/2009

- 4 Development status - 01/06/2009

- 5 Development status - 04/06/2009

- 6 Small and Quick Benchmark - 06/06/2009

- 7 Development status - 13/06/2009

- 8 Accessing and Using the service - 15/06/2009

- 9 Development status - 16/06/2009

- 10 Development status - 17/06/2009

- 11 Development status - 18/06/2009

- 12 Development status - 24/06/2009

- 13 Development status - 06/07/2009

Introduction

The aim of this project is to design and implement an "Apertium Service" that can be easily integrated into IT systems built on a Service-Oriented Architecture (SOA).

Currently, translating a large corpus of documents means creating many Apertium processes, each of which must load the required transducers, grammars, etc.; this wastes resources and therefore limits scalability.

One solution is to implement an Apertium Service that doesn't need to reload all the resources for every translation task. In addition, such a service would be able to handle multiple requests at the same time (useful, for example, in a Web 2.0-oriented environment), would improve scalability, and could be included in existing business processes and integrated into existing IT infrastructures with minimal effort.

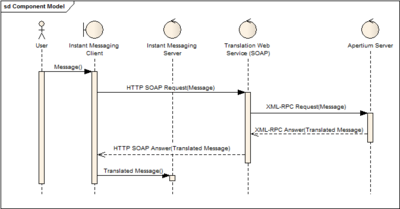

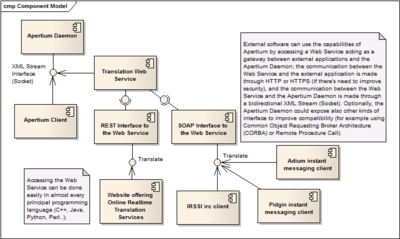

In addition, this project also aims to implement a Web Service acting as a gateway between the Apertium Service and external applications (loading Apertium inside the Web Service itself would make no sense, since a Web Service is stateless and that wouldn't solve the scalability problem). The Web Service will offer both a SOAP and a REST interface, to make it easier for external applications and services (for example IM clients, web sites, large IT business processes) to include translation capabilities without importing the entire Apertium application.

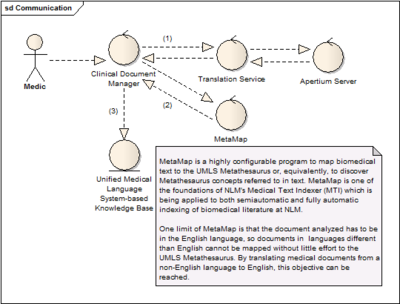

A possible Use Case: a Healthcare organisation

In Healthcare Information Systems (HIS), there is a general trend towards IT infrastructures based on a SOA model, to improve access to and integration of external services. This use case shows how a healthcare organisation in a non-English-speaking country can greatly benefit from integrating an Apertium-based Translation Service into its IT infrastructure.

MetaMap is an online application that maps text to UMLS Metathesaurus concepts, which is very useful for interoperability among different languages and systems within the biomedical domain. MetaMap Transfer (MMTx) is a Java program that makes MetaMap available to biomedical researchers. Currently, MetaMap only works effectively on text written in English, which makes it difficult to use the UMLS Metathesaurus to extract concepts from non-English biomedical texts.

A possible solution to this problem is to translate the non-English biomedical texts into English, so that MetaMap (and similar text mining tools) can work effectively on them.

Service's internals

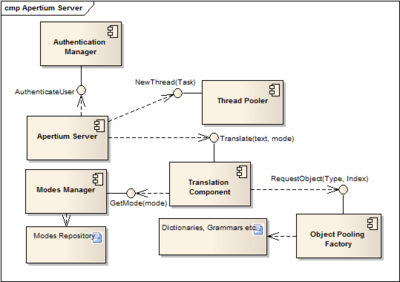

A possible efficient and scalable implementation of an Apertium Server is composed of the following components:

- Authentication Manager: authenticates users (using basic HTTP authentication); it can be interfaced, for example, with an external OpenLDAP server, a DBMS containing user information, etc.

- Thread Pooler: an implementation of the Thread Pool pattern: http://en.wikipedia.org/wiki/Thread_pool

- Modes Manager: locates mode files and parses them into the corresponding sets of instructions

- Object Pooling Factory: an implementation of the Object Pool pattern: http://en.wikipedia.org/wiki/Object_pool

- Translating Component: using the instructions contained in a mode file (parsed by the Modes Manager component), translates a given text from one language into another.
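As a rough illustration of how the Thread Pooler and the Object Pooling Factory could cooperate, here is a minimal Python 3 sketch (the service itself is written in C++, so this is not its actual code). `ObjectPool`, `FakeTranslator` and `translate_many` are hypothetical names invented for this example; `FakeTranslator` merely stands in for a pipeline that is expensive to build.

```python
import queue
from concurrent.futures import ThreadPoolExecutor


class FakeTranslator:
    """Hypothetical stand-in for a pipeline that is expensive to build."""

    instances_created = 0  # counts how many times the costly setup ran

    def __init__(self, mode):
        FakeTranslator.instances_created += 1
        self.mode = mode

    def translate(self, text):
        # A real translator would run the Apertium pipeline here.
        return "[%s] %s" % (self.mode, text)


class ObjectPool:
    """Object Pool pattern: build a fixed set of objects once, then lend them out."""

    def __init__(self, factory, size):
        self._idle = queue.Queue()
        for _ in range(size):
            self._idle.put(factory())

    def acquire(self):
        return self._idle.get()  # blocks until an object is free

    def release(self, obj):
        self._idle.put(obj)


def translate_many(texts, mode="en-es", workers=4):
    pool = ObjectPool(lambda: FakeTranslator(mode), size=workers)

    def job(text):
        translator = pool.acquire()
        try:
            return translator.translate(text)
        finally:
            pool.release(translator)  # recycle instead of destroying

    # Thread Pool pattern: a fixed number of worker threads serve all requests.
    with ThreadPoolExecutor(max_workers=workers) as executor:
        return list(executor.map(job, texts))


results = translate_many(["request %d" % i for i in range(20)])
print(results[0])
print(FakeTranslator.instances_created)
```

Twenty requests are served by only four translator objects, which is the point of pooling: the setup cost is paid once per pooled object rather than once per request.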

Service's Interface

A possible interface for the Apertium service's functionalities is based on XML-RPC, a remote procedure call protocol which uses XML to encode its calls and HTTP as a transport mechanism. The XML-RPC standard is described in detail here: http://www.xmlrpc.com/spec
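To make the "XML-encoded call" idea concrete, the following short Python 3 sketch uses the standard `xmlrpc.client` module to build and decode a request payload without contacting any server. The method name `translate` and its arguments are only illustrative.

```python
import xmlrpc.client

# Encode a call to a method named "translate" with three string parameters.
request_xml = xmlrpc.client.dumps(("Hello world", "en", "es"), methodname="translate")
print(request_xml)

# Decoding the payload gives back the parameters and the method name.
params, method = xmlrpc.client.loads(request_xml)
print(method, params)
```

The printed XML is what would travel in the body of an HTTP POST request to the service.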

The list of methods exposed by the Apertium RPC service will probably be similar to the following:

- array<string> GetAvailableModes();

- string Translate(string Message, string modeName, string inputEncoding);

TODO: add methods to get Server's current capabilities and load; this is useful to implement some kind of load balancing in the case of a cluster of Apertium Servers
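A minimal sketch of how the two methods listed above could be exposed over XML-RPC, written in Python 3 with the standard `xmlrpc.server` module rather than the service's own C++ stack. The mode list and the body of `translate` are placeholders invented for the example; only the method names and parameter order come from the list above.

```python
import threading
import xmlrpc.client
from xmlrpc.server import SimpleXMLRPCServer

# Hypothetical mode list; a real server would discover installed mode files.
AVAILABLE_MODES = ["en-es", "es-en"]


def get_available_modes():
    return AVAILABLE_MODES


def translate(message, mode_name, input_encoding):
    if mode_name not in AVAILABLE_MODES:
        raise ValueError("unknown mode: %s" % mode_name)
    # Placeholder: a real implementation would run the mode's pipeline.
    return "[%s] %s" % (mode_name, message)


def make_server(host="127.0.0.1", port=0):
    # port=0 asks the operating system for any free port.
    server = SimpleXMLRPCServer((host, port), logRequests=False)
    server.register_function(get_available_modes, "GetAvailableModes")
    server.register_function(translate, "Translate")
    return server


server = make_server()
threading.Thread(target=server.serve_forever, daemon=True).start()

# Call the two methods through a local client, then stop the server.
port = server.server_address[1]
proxy = xmlrpc.client.ServerProxy("http://127.0.0.1:%d/" % port)
modes = proxy.GetAvailableModes()
reply = proxy.Translate("Hello world", "en-es", "UTF-8")
print(modes, reply)

server.shutdown()
server.server_close()
```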

Development status - 22/05/2009

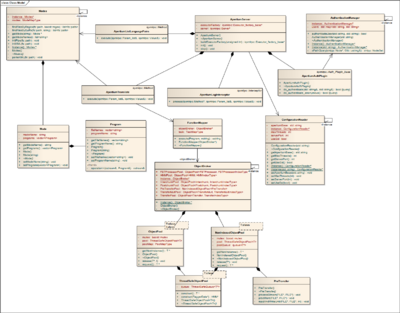

At present, a working prototype of the Apertium Service has been implemented; it reflects the attached UML diagram.

The project is composed of the following classes/components:

- ApertiumServer: its role is to keep the service running and handle requests (thread pooling, handling priorities etc.); it also maps invoked methods to the classes handling them (translate to ApertiumTranslate and listLanguagePairs to ApertiumListLanguagePairs).

- ApertiumTranslate: handles the translation process; it recreates the Apertium pipeline (including formatting) using the information contained in mode files, feeds it with user-provided input, and returns the translated message.

- ApertiumListLanguagePairs: returns a list of available modes.

- Modes: handles the parsing of mode files (both .mode and modes.xml).

- FunctionMapper: its main role is to execute a specific task of the Apertium pipeline using a specific command contained in a mode.

- ObjectBroker: an implementation of the Object Pooling pattern; it handles the creation and recycling of objects.
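The interplay between Modes and FunctionMapper can be sketched as follows in Python 3. This assumes that a `.mode` file holds a shell-style pipeline of commands separated by `|`; the file paths, the handler table and the function names are made up for the example, and the naive split on `|` would not cope with a quoted pipe character.

```python
import shlex

# Hypothetical contents of a .mode file: one shell-style pipeline.
MODE_TEXT = (
    "lt-proc /tmp/en-es.automorf.bin | "
    "apertium-tagger -g /tmp/en-es.prob | "
    "apertium-pretransfer"
)


def parse_mode(text):
    """Split a pipeline into a list of (program, arguments) steps."""
    steps = []
    for stage in text.split("|"):
        words = shlex.split(stage)
        if words:
            steps.append((words[0], words[1:]))
    return steps


def run_pipeline(steps, handlers, text):
    """Feed text through each step, using an in-process handler per program."""
    for program, args in steps:
        text = handlers[program](text, args)
    return text


steps = parse_mode(MODE_TEXT)
# Dummy handlers that only record which step ran; real ones would call
# the corresponding library code instead of spawning a process.
handlers = {name: (lambda t, a, n=name: t + " >" + n) for name, _ in steps}
print(steps)
print(run_pipeline(steps, handlers, "input"))
```

Mapping each command name to an in-process function is what lets a long-running service avoid starting a new process, and reloading its data, for every pipeline stage.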

At this moment, a working version of the Apertium XML-RPC service is available at http://www.neuralnoise.com:1234/ApertiumServer and it can be easily tested through a simple AJAX interface available at http://www.neuralnoise.com/ApertiumWeb2/

Sample usage: accessing the service from Python

    pasquale@dell:~$ python
    Python 2.5.4 (r254:67916, Feb 17 2009, 20:16:45)
    [GCC 4.3.3] on linux2
    Type "help", "copyright", "credits" or "license" for more information.
    >>> import xmlrpclib
    >>> proxy = xmlrpclib.ServerProxy("http://xixona.dlsi.ua.es:8080/RPC2")
    >>> proxy.translate("Hello world", "en", "es")
    'Hola Mundo'
    >>> proxy.listLanguagePairs()
    ['en-eo', 'es-en', 'en-es']

CURRENT ISSUE I'M GOING TO SOLVE ASAP: at the moment, LTToolBox and Apertium's {de, re}formatters use GNU Flex to generate their lexical scanners. The deployed version of the Apertium Service uses Flex too, but in C++ mode, since I needed multiple concurrent (i.e. thread-safe) lexical scanners running at the same time. The problem is that Flex, when used in C++ mode, doesn't seem to handle wide chars properly, so I'm temporarily switching (until I find a proper solution) to Boost::Spirit, a lexical scanner and parser generator that is already among the service's dependencies (it's part of the Boost libraries, a collection of peer-reviewed, open source libraries that extend the functionality of C++).

Development status - 01/06/2009

The charset issue described above has just been fixed; both the input and the output are now UTF-8 encoded:

    pasquale@dell:~$ python
    Python 2.5.4 (r254:67916, Feb 17 2009, 20:16:45)
    [GCC 4.3.3] on linux2
    Type "help", "copyright", "credits" or "license" for more information.
    >>> import xmlrpclib
    >>> proxy = xmlrpclib.ServerProxy("http://xixona.dlsi.ua.es:8080/RPC2")
    >>> proxy.translate("How are you?", "en", "es")
    u'C\xf3mo te es?'
    >>> print proxy.translate("How are you?", "en-es")
    Cómo te es?
    >>>

The new working prototype of the service can be tested here: http://www.neuralnoise.com/ApertiumWeb2/

Current work is focusing on handling Constraint Grammars, used by some language pairs and not included in lttoolbox or apertium.

Development status - 04/06/2009

Now the Apertium Service is able, given a sentence, to identify its language; this is done using an algorithm described in the following article:

N-Gram-Based Text Categorisation (1994), by William B. Cavnar, John M. Trenkle

In Proceedings of SDAIR-94, 3rd Annual Symposium on Document Analysis and Information Retrieval

http://www.info.unicaen.fr/~giguet/classif/cavnar_trenkle_ngram.ps
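The core idea of that article (rank the most frequent character n-grams of a text and compare the ranking against per-language profiles using an "out-of-place" distance) can be sketched in a few lines of Python 3. This is a simplified illustration, not the service's implementation: the training sentences are tiny and made up, the padding scheme is reduced to a single `_` on each side of a word, and all function names are hypothetical.

```python
from collections import Counter

# Tiny, made-up training texts; real profiles are built from much larger corpora.
TRAINING = {
    "en": "the quick brown fox jumps over the lazy dog and then runs to the river",
    "it": "la volpe veloce salta sopra il cane pigro e poi corre verso il fiume",
}
PROFILE_SIZE = 300  # number of top-ranked n-grams kept per profile


def ngram_profile(text, max_n=5, size=PROFILE_SIZE):
    """Return the most frequent character n-grams, most frequent first."""
    counts = Counter()
    for word in text.lower().split():
        padded = "_" + word + "_"
        for n in range(1, max_n + 1):
            for i in range(len(padded) - n + 1):
                counts[padded[i:i + n]] += 1
    # Sort by frequency, then alphabetically so that ties are deterministic.
    ranked = sorted(counts, key=lambda g: (-counts[g], g))
    return ranked[:size]


def out_of_place(doc_profile, lang_profile):
    """Sum of rank differences; unseen n-grams get the maximum penalty."""
    ranks = {gram: rank for rank, gram in enumerate(lang_profile)}
    max_penalty = len(lang_profile)
    return sum(
        abs(rank - ranks[gram]) if gram in ranks else max_penalty
        for rank, gram in enumerate(doc_profile)
    )


PROFILES = {lang: ngram_profile(text) for lang, text in TRAINING.items()}


def classify(text):
    """Pick the language whose profile is closest to the text's profile."""
    doc = ngram_profile(text)
    return min(PROFILES, key=lambda lang: out_of_place(doc, PROFILES[lang]))


print(classify("il cane corre verso la volpe"))
```

With profiles this small the classifier is only a toy; the quality of the guess depends heavily on the size and variety of the training text.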

Sample usage:

    pasquale@dell:~$ python
    Python 2.5.4 (r254:67916, Feb 17 2009, 20:16:45)
    [GCC 4.3.3] on linux2
    Type "help", "copyright", "credits" or "license" for more information.
    >>> import xmlrpclib
    >>> proxy = xmlrpclib.ServerProxy("http://localhost:1234/ApertiumServer")
    >>> proxy.classify("Voli di Stato, Berlusconi indagato")
    '[it]'
    >>>

Small and Quick Benchmark - 06/06/2009

I made the following Python script to quickly benchmark the project's current, still-to-be-optimized, prototype:

    #!/usr/bin/python

    import time
    import xmlrpclib

    def timing(func):
        # Decorator: print how long the wrapped function took, in milliseconds.
        def wrapper(*arg):
            t1 = time.time()
            res = func(*arg)
            t2 = time.time()
            print '%s took %0.3f ms' % (func.func_name, (t2-t1)*1000.0)
            return res
        return wrapper

    @timing
    def bench():
        proxy = xmlrpclib.ServerProxy("http://localhost:1234/ApertiumServer")
        # range(1000) sends exactly 1000 sequential requests
        for i in range(1000):
            proxy.translate("This is a test for the machine translation program", "en-es")

    if __name__ == "__main__":
        bench()

It sends 1000 translation requests to the service and prints the time taken to complete them; here's the output:

    pasquale@dell:~/gsoc/apertium-service/bench$ ./bench.py
    bench took 39365.654 ms

This results in ~40 ms per request. Note that this prototype still lacks some optimisations, which have been left out so far to keep the development process easier; by introducing them, I think we can easily get to ~20-25 ms per request. In addition, the requests were executed in sequence, without taking advantage of the service's multithreading capabilities.
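Since the benchmark above only issues sequential requests, here is a hedged Python 3 sketch of how a concurrent variant could be structured. `fake_translate` is a stand-in that sleeps for 5 ms so the snippet runs without a server; against a real service it would be replaced by a function calling `translate` on an XML-RPC proxy, and it is probably safest to give each worker thread its own proxy object.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_translate(text, mode):
    # Stand-in for proxy.translate(); sleeps to imitate a 5 ms round trip.
    time.sleep(0.005)
    return text


def bench(call, requests=100, workers=1):
    """Return the elapsed time in milliseconds for `requests` calls."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as executor:
        futures = [
            executor.submit(call, "This is a test", "en-es")
            for _ in range(requests)
        ]
        for future in futures:
            future.result()  # re-raises any error from the worker
    return (time.perf_counter() - start) * 1000.0


sequential_ms = bench(fake_translate, workers=1)
concurrent_ms = bench(fake_translate, workers=8)
print("sequential: %.1f ms, 8 workers: %.1f ms" % (sequential_ms, concurrent_ms))
```

The numbers printed here only reflect the simulated delay; they say nothing about the real service, whose gain from concurrency depends on how many requests it can actually process in parallel.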

Development status - 13/06/2009

Support for Constraint Grammars has just been implemented, integrated and tested; in addition, all the language pairs available in the trunk/ directory of the SVN repository can be tested through http://www.neuralnoise.com/ApertiumWeb2/ (including those implemented using Constraint Grammars).

Development is now focusing on:

- making the service's interface compatible with the Google Translate API;

- implementing a fully functional CORBA interface for the service (as an alternative to the XML-RPC one).

Accessing and Using the service - 15/06/2009

I haven't tested all of these samples; if something is buggy, it will be fixed ASAP :) (more languages to come)

Ruby

    #!/usr/bin/ruby

    require 'xmlrpc/client'

    server = XMLRPC::Client.new("xixona.dlsi.ua.es", "/RPC2", 8080)
    puts server.call("translate", "This is a test for the machine translation program.", "en", "es")

Python

    #!/usr/bin/python

    import xmlrpclib

    proxy = xmlrpclib.ServerProxy("http://xixona.dlsi.ua.es:8080/RPC2")
    print proxy.translate("This is a test for the machine translation program.", "en", "es")

Java

    import java.net.URL;
    import org.apache.xmlrpc.XmlRpcException;
    import org.apache.xmlrpc.client.XmlRpcClient;
    import org.apache.xmlrpc.client.XmlRpcClientConfigImpl;
    import org.apache.xmlrpc.client.XmlRpcSunHttpTransportFactory;

    ..

    XmlRpcClientConfigImpl config = new XmlRpcClientConfigImpl();
    config.setServerURL(new URL("http://xixona.dlsi.ua.es:8080/RPC2"));

    XmlRpcClient client = new XmlRpcClient();
    client.setTransportFactory(new XmlRpcSunHttpTransportFactory(client));
    client.setConfig(config);

    client.execute("translate", new Object[] { "This is a test for the machine translation program.", "en", "es" });

Perl

    #!/usr/bin/perl

    require RPC::XML;
    require RPC::XML::Client;

    my $client = RPC::XML::Client->new("http://xixona.dlsi.ua.es:8080/RPC2");
    my $res = $client->send_request("translate", "This is a test for the machine translation program.", "en", "es");

    binmode(STDOUT, ":utf8");
    print $res->value . "\n";
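The Python sample above targets Python 2, where the client module is called `xmlrpclib`. In Python 3 the same functionality lives in `xmlrpc.client`, so an equivalent client could look like the sketch below. The helper names `make_proxy` and `translate`, the default URL and the error handling are assumptions made for this example; the actual request is left commented out because it needs a running server.

```python
import xmlrpc.client


def make_proxy(url="http://localhost:1234/ApertiumServer"):
    # Creating the proxy does not open a connection; that happens on the first call.
    return xmlrpc.client.ServerProxy(url)


def translate(proxy, text, src, dest):
    try:
        return proxy.translate(text, src, dest)
    except (xmlrpc.client.Fault, xmlrpc.client.ProtocolError, OSError) as error:
        # Fault: the server rejected the call; the others: transport problems.
        raise RuntimeError("translation request failed: %s" % error)


proxy = make_proxy()
# With a server running at that URL:
# print(translate(proxy, "This is a test for the machine translation program.", "en", "es"))
```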

Development status - 16/06/2009

Now the apertium-service project has a build system based on GNU build tools; using it is quite easy:

    pasquale@dell:~/gsoc/apertium-service$ ./autogen.sh --prefix=/usr/local/
    - aclocal.
    - autoconf.
    - autoheader.
    - automake.
    checking for a BSD-compatible install... /usr/bin/install -c
    checking whether build environment is sane... yes
    checking for a thread-safe mkdir -p... /bin/mkdir -p
    checking for gawk... gawk
    checking whether make sets $(MAKE)... yes
    checking for g++... g++
    checking for C++ compiler default output file name... a.out
    checking whether the C++ compiler works... yes
    checking whether we are cross compiling... no
    checking for suffix of executables...
    checking for suffix of object files... o
    checking whether we are using the GNU C++ compiler... yes
    checking whether g++ accepts -g... yes
    checking for style of include used by make... GNU
    checking dependency style of g++... gcc3
    checking dependency style of g++... (cached) gcc3
    checking for gcc... gcc
    checking whether we are using the GNU C compiler... yes
    checking whether gcc accepts -g... yes
    checking for gcc option to accept ISO C89... none needed
    checking dependency style of gcc... gcc3
    checking dependency style of gcc... (cached) gcc3
    checking how to run the C preprocessor... gcc -E
    checking for a BSD-compatible install... /usr/bin/install -c
    checking whether make sets $(MAKE)... (cached) yes
    checking for boostlib >= 1.37.0... yes
    checking whether the Boost::Thread library is available... yes
    checking for main in -lboost_thread... yes
    checking whether the Boost::Filesystem library is available... yes
    checking for main in -lboost_filesystem... yes
    checking whether the Boost::Program_Options library is available... yes
    checking for main in -lboost_program_options... yes
    checking whether the Boost::Regex library is available... yes
    checking for main in -lboost_regex... yes
    checking for libiconv_open in -liconv... yes
    libtextcat 2.1 or greater found, text language guessing support being built...
    checking for pkg-config... /usr/bin/pkg-config
    checking pkg-config is at least version 0.9.0... yes
    checking for XMLPP... yes
    checking for LTTOOLBOX... yes
    checking for APERTIUM... yes
    checking for LIBIQXMLRPC... yes
    ..
    checking for working mmap... yes
    checking for open_memstream... yes
    checking for open_wmemstream... yes
    configure: creating ./config.status
    config.status: creating Makefile
    config.status: creating src/Makefile
    config.status: creating src/config.h
    config.status: executing depfiles commands
    pasquale@dell:~/gsoc/apertium-service$ make
    Making all in src
    make[1]: Entering directory `/home/pasquale/gsoc/apertium-service/src'
    make all-am
    make[2]: Entering directory `/home/pasquale/gsoc/apertium-service/src'
    g++ -DHAVE_CONFIG_H -I. -I/usr/include/libxml++-2.6 -I/usr/lib/libxml++-2.6/include -I/usr/include/libxml2 -I/usr/include/glibmm-2.4 -I/usr/lib/glibmm-2.4/include -I/usr/include/sigc++-2.0 -I/usr/lib/sigc++-2.0/include -I/usr/include/glib-2.0 -I/usr/lib/glib-2.0/include -I/usr/local/include/lttoolbox-3.1 -I/usr/local/lib/lttoolbox-3.1/include -I/usr/include/libxml2 -I/usr/local/include/apertium-3.1 -I/usr/local/lib/apertium-3.1/include -I/usr/local/include/lttoolbox-3.1 -I/usr/local/lib/lttoolbox-3.1/include -I/usr/include/libxml2 -I/usr/local/include/ -I/usr/include/libxml++-1.0 -I/usr/lib/libxml++-1.0/include -I/usr/include/libxml2 -Wno-deprecated -D_REENTRANT -I/usr/include -MT ApertiumServer.o -MD -MP -MF .deps/ApertiumServer.Tpo -c -o ApertiumServer.o `test -f './ApertiumServer.cpp' || echo './'`./ApertiumServer.cpp
    mv -f .deps/ApertiumServer.Tpo .deps/ApertiumServer.Po
    ..
    g++ -DHAVE_CONFIG_H -I. -I/usr/include/libxml++-2.6 -I/usr/lib/libxml++-2.6/include -I/usr/include/libxml2 -I/usr/include/glibmm-2.4 -I/usr/lib/glibmm-2.4/include -I/usr/include/sigc++-2.0 -I/usr/lib/sigc++-2.0/include -I/usr/include/glib-2.0 -I/usr/lib/glib-2.0/include -I/usr/local/include/lttoolbox-3.1 -I/usr/local/lib/lttoolbox-3.1/include -I/usr/include/libxml2 -I/usr/local/include/apertium-3.1 -I/usr/local/lib/apertium-3.1/include -I/usr/local/include/lttoolbox-3.1 -I/usr/local/lib/lttoolbox-3.1/include -I/usr/include/libxml2 -I/usr/local/include/ -I/usr/include/libxml++-1.0 -I/usr/lib/libxml++-1.0/include -I/usr/include/libxml2 -Wno-deprecated -D_REENTRANT -I/usr/include -MT main.o -MD -MP -MF .deps/main.Tpo -c -o main.o `test -f './main.cpp' || echo './'`./main.cpp
    mv -f .deps/main.Tpo .deps/main.Po
    g++ -Wno-deprecated -D_REENTRANT -I/usr/include -o apertium_service ApertiumServer.o Encoding.o ApertiumListLanguagePairs.o ApertiumClassify.o PreTransfer.o Modes.o FunctionMapper.o ObjectBroker.o TextClassifier.o ApertiumLogInterceptor.o ApertiumAuthPlugin.o ApertiumTest.o ApertiumTranslate.o ApertiumRuntimeException.o Rule.o ApertiumApplicator.o Recycler.o Window.o GrammarApplicator_runContextualTest.o uextras.o Cohort.o GrammarApplicator_reflow.o CompositeTag.o Strings.o GrammarApplicator_matchSet.o TextualParser.o BinaryGrammar_write.o GrammarApplicator_runGrammar.o Reading.o Grammar.o BinaryGrammar.o Set.o ContextualTest.o Tag.o icu_uoptions.o GrammarWriter.o Anchor.o GrammarApplicator_runRules.o GrammarApplicator.o SingleWindow.o BinaryGrammar_read.o AuthenticationManager.o ConfigurationManager.o Logger.o main.o -lxml++-2.6 -lxml2 -lglibmm-2.4 -lgobject-2.0 -lsigc-2.0 -lglib-2.0 -L/usr/local/lib -llttoolbox3 -lxml2 -L/usr/local/lib -lapertium3 -llttoolbox3 -lxml2 -lpcre -L/usr/local/lib -liqxmlrpc-server -lboost_thread-mt -lssl -lcrypto -lxml++-1.0 -lxml2 -lboost_thread -lboost_filesystem -lboost_program_options -lboost_regex -ltextcat -lm -L/usr/lib -licui18n -licuuc -licudata -lm -licuio -liconv
    ApertiumTranslate.o: In function `Deformat::printBuffer()':
    ApertiumTranslate.cpp:(.text._ZN8Deformat11printBufferEv[Deformat::printBuffer()]+0x66): warning: the use of `tmpnam' is dangerous, better use `mkstemp'
    make[2]: Leaving directory `/home/pasquale/gsoc/apertium-service/src'
    make[1]: Leaving directory `/home/pasquale/gsoc/apertium-service/src'
    make[1]: Entering directory `/home/pasquale/gsoc/apertium-service'
    make[1]: Nothing to be done for `all-am'.
    make[1]: Leaving directory `/home/pasquale/gsoc/apertium-service'
    pasquale@dell:~/gsoc/apertium-service$ ./src/apertium_service
    Starting server on port: 1234

Development status - 17/06/2009

I just started implementing some optimisations, such as applying the Object Pooling pattern to the {de, re}formatters too (so that regexes don't have to be recompiled each time), and I've already lowered the time required for each translation from ~40 ms to less than 5 ms :)

Now I should clean up the code (it's very hacky right now) and put everything in trunk/ (currently all those hacks are in branches/gsoc2009/deadbeef/Core/).

In addition, the service's interface has been made more similar to the Google Translate API; here's a (IMHO quite self-explanatory) demo:

    pasquale@dell:~/gsoc$ python
    Python 2.5.4 (r254:67916, Feb 17 2009, 20:16:45)
    [GCC 4.3.3] on linux2
    Type "help", "copyright", "credits" or "license" for more information.
    >>> import xmlrpclib
    >>> proxy = xmlrpclib.ServerProxy("http://localhost:1234/ApertiumServer")
    >>> proxy.languagePairs()
    [{'destLang': 'en', 'srcLang': 'bn'}, {'destLang': 'fr', 'srcLang': 'br'}, {'destLang': 'en', 'srcLang': 'ca'}, {'destLang': 'en-multi', 'srcLang': 'ca'}, {'destLang': 'eo', 'srcLang': 'ca'}, .. {'destLang': 'es-ca', 'srcLang': 'val'}]
    >>> proxy.translate("Richmond Bridge is a Grade I listed 18th-century stone arch bridge which crosses the River Thames at Richmond, in southwest London, England, connecting the two halves of the present-day London Borough of Richmond upon Thames.", "en", "es")
    {'translation': u'*Richmond El puente es un Grado list\xe9 piedra de 18.\xba siglos puente de arco que cruza el R\xedo *Thames en *Richmond, en Londres de suroeste, Inglaterra, conectando las dos mitades del Burgo de Londres de d\xeda presente de *Richmond a *Thames.'}
    >>> proxy.translate("Richmond Bridge is a Grade I listed 18th-century stone arch bridge which crosses the River Thames at Richmond, in southwest London, England, connecting the two halves of the present-day London Borough of Richmond upon Thames.", "", "es")
    {'detectedSourceLanguage': 'en', 'translation': u'*Richmond El puente es un Grado list\xe9 piedra de 18.\xba siglos puente de arco que cruza el R\xedo *Thames en *Richmond, en Londres de suroeste, Inglaterra, conectando las dos mitades del Burgo de Londres de d\xeda presente de *Richmond a *Thames.'}
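The shape of these responses suggests a simple rule: when the source language is empty, the service guesses it and reports the guess alongside the translation. A minimal Python 3 sketch of that logic follows; `detect_language` and `run_pipeline` are hypothetical placeholders, not the service's real functions.

```python
def detect_language(text):
    # Hypothetical placeholder for the service's language identifier.
    return "en"


def run_pipeline(text, src, dest):
    # Hypothetical placeholder for the actual translation.
    return "<%s-%s> %s" % (src, dest, text)


def translate(text, src_lang, dest_lang):
    """Build a response dict shaped like the ones in the session above."""
    response = {}
    if not src_lang:
        # Empty source language: guess it and report the guess to the caller.
        src_lang = detect_language(text)
        response["detectedSourceLanguage"] = src_lang
    response["translation"] = run_pipeline(text, src_lang, dest_lang)
    return response


print(translate("Hello", "en", "es"))
print(translate("Hello", "", "es"))
```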

Development status - 18/06/2009

I've propagated the changes to apertium-service's source code, and now the time required to handle each translation request (including the overhead introduced by service-client communication) is about 7.6 milliseconds:

    pasquale@dell:~/gsoc/apertium-service/bench$ ./bench.py
    bench took 7636.927 ms # time required to answer 1000 sequential requests
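For comparison with the first benchmark run of 06/06/2009 (39365.654 ms for the same script), the arithmetic can be spelled out in a few lines of Python 3. The figures are the two totals reported on this page; the throughput is a derived estimate for sequential requests only.

```python
before_ms = 39365.654  # 1000 sequential requests, 06/06/2009 run
after_ms = 7636.927    # 1000 sequential requests, 18/06/2009 run
requests = 1000

per_request_before = before_ms / requests
per_request_after = after_ms / requests
speedup = before_ms / after_ms
throughput_after = requests / (after_ms / 1000.0)  # requests per second

print("%.2f ms -> %.2f ms per request" % (per_request_before, per_request_after))
print("speed-up: %.2fx, about %.0f requests/s" % (speedup, throughput_after))
```

That is roughly 39.37 ms down to 7.64 ms per request, a speed-up of about 5.15x, or around 131 sequential requests per second.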

Development status - 24/06/2009

I took some time to clean up the code and fix possible thread safety issues that may arise when running multiple concurrent translations. There was a small issue in lttoolbox when using multiple instances of the FSTProcessor class in the same program, and I wrote a patch to fix it.

With this patch applied to lttoolbox, the service is able to handle an arbitrary number of concurrent translations without any problems.

Some more details about the patch are available here[1].

Development status - 06/07/2009

Hi :D I must admit I took a few days to finish some university projects (and to look up some papers about the Lexical Selection problem; I hope I can help build something!). I've made a SOAP interface to access the service; you can check the WSDL here:

http://www.neuralnoise.com/ApertiumWeb2/soap.php?wsdl

The next step will be to implement a REST interface to the service. I already built one using the WSO2 framework, but since it's pretty huge and painful to install, I'm now trying to create something small, general and well-designed enough by myself.