Difference between revisions of "User:Gang Chen/GSoC 2013 Progress"


Revision as of 10:28, 9 September 2013


GSOC 2013

I'm working with Apertium for the GSoC 2013, on the project "Sliding Window Part of Speech Tagger for Apertium".

My proposal is here: Proposal

SVN repo

1. The tagger: https://svn.code.sf.net/p/apertium/svn/branches/apertium-swpost/apertium

2. The en-es language pair (for experiments): https://svn.code.sf.net/p/apertium/svn/branches/apertium-swpost/apertium-en-es

3. The es-ca language pair (for experiments): https://svn.code.sf.net/p/apertium/svn/branches/apertium-swpost/apertium-es-ca

Usage

Command line

HMM tagger usage:

apertium-tagger -t 8 es.dic es.crp apertium-en-es.es.tsx es-en.prob.new

apertium-tagger -g es-en.prob.new

SW tagger usage:

apertium-tagger -w -t 8 es.dic es.crp apertium-en-es.es.tsx es-en.prob.new

apertium-tagger -w -g es-en.prob.new
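The commands above can also be assembled from a script when many training runs are needed. The following is a minimal sketch, not part of the repository: the helper names `train_command`, `tag_command` and `run` are hypothetical, and only the flags shown on this page (`-t`, `-g`, `-w`) are used.

```python
import subprocess


def train_command(iterations, dic, crp, tsx, prob, sliding_window=False):
    """Build the argument list for a training run (hypothetical helper)."""
    cmd = ["apertium-tagger"]
    if sliding_window:
        cmd.append("-w")  # select the SW tagger instead of the default HMM tagger
    cmd += ["-t", str(iterations), dic, crp, tsx, prob]
    return cmd


def tag_command(prob, sliding_window=False):
    """Build the argument list for a tagging run (hypothetical helper)."""
    cmd = ["apertium-tagger"]
    if sliding_window:
        cmd.append("-w")
    cmd += ["-g", prob]
    return cmd


def run(cmd, text=None):
    """Run a command, optionally feeding text on stdin, and return its stdout."""
    result = subprocess.run(cmd, input=text, capture_output=True, text=True, check=True)
    return result.stdout
```

For example, `train_command(8, "es.dic", "es.crp", "apertium-en-es.es.tsx", "es-en.prob.new", sliding_window=True)` yields the argument list for the SW training command shown above.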

Tagging Experiments

1. Run the whole training and evaluation pipeline

To run the full training and evaluation procedure, use these two scripts:

apertium-en-es/es_step1_preporcess.sh

apertium-en-es/es_step2_train_tag_eval.sh

2. Evaluation scripts

The apertium-swpost/apertium-xx-yy package (e.g. apertium-en-es) contains two small scripts that perform the evaluation and are called by es_step2_train_tag_eval.sh:

apertium-en-es/es-tagger-data/extract_word_pos.py

apertium-en-es/es-tagger-data/eval.py
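As an illustration of what such an evaluation computes, here is a minimal sketch. It is not the code of eval.py. It assumes, as a labeled assumption, that a word the tagger leaves unanswered (for example an unknown word) is represented as `None`, that precision is measured over answered words only, and that recall is measured over all words.

```python
def evaluate(gold, predicted):
    """Return (precision, recall, f1) for two aligned tag sequences.

    gold:      list of reference tags
    predicted: list of tags, with None where the tagger gave no answer
               (assumed representation of unknown words)
    """
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must be aligned")
    answered = sum(1 for p in predicted if p is not None)
    correct = sum(1 for g, p in zip(gold, predicted) if p is not None and p == g)
    precision = correct / answered if answered else 0.0
    recall = correct / len(gold) if gold else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1
```

With this definition, unanswered words lower recall but not precision, which is one way to keep unknown words visible in the reported scores.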

3. Experimental Results

3.1 Language Pairs

| language | svn | training | test-set |

|---|---|---|---|

| es | apertium-swpost/apertium-en-es | Europarl Spanish | 1000+ lines, hand-tagged |

| ca | apertium-swpost/apertium-es-ca | Catalan Wikipedia | 1000+ lines, hand-tagged |

| en | apertium-swpost/apertium-en-es | Europarl English | 1000+ lines, 80% automatically mapped from the TnT tagger, 20% hand-tagged |

3.2 System-precision

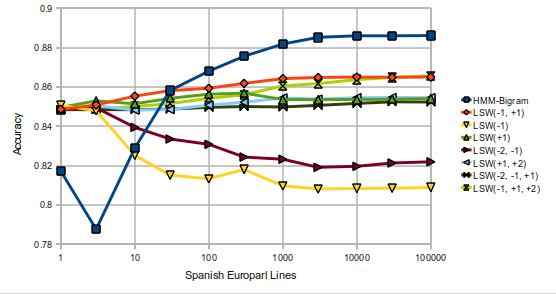

Spanish Europarl

| Lines | HMM_Bigram | LSW_L1R1 | LSW_L1 | LSW_R1 | LSW_L12 | LSW_R12 | LSW_L12R1 | LSW_L1R12 |
|---|---|---|---|---|---|---|---|---|
| 1 | 0.8171 | 0.8486 | 0.8506 | 0.8496 | 0.8485 | 0.8484 | 0.8484 | 0.8484 |
| 3 | 0.7876 | 0.8508 | 0.8479 | 0.8529 | 0.8494 | 0.8504 | 0.8484 | 0.8487 |
| 10 | 0.8289 | 0.8553 | 0.8252 | 0.8517 | 0.8394 | 0.8484 | 0.8484 | 0.85 |
| 30 | 0.8582 | 0.8574 | 0.8153 | 0.8549 | 0.8331 | 0.849 | 0.8487 | 0.8515 |
| 100 | 0.868 | 0.8593 | 0.8134 | 0.8573 | 0.8302 | 0.8515 | 0.8494 | 0.8542 |
| 300 | 0.8756 | 0.863 | 0.8182 | 0.8578 | 0.8245 | 0.8527 | 0.8494 | 0.856 |
| 1000 | 0.8818 | 0.8654 | 0.81 | 0.8547 | 0.8237 | 0.8549 | 0.8496 | 0.8605 |
| 3000 | 0.8852 | 0.8662 | 0.8084 | 0.8546 | 0.8194 | 0.8545 | 0.8503 | 0.8617 |
| 10000 | 0.886 | 0.8665 | 0.8083 | 0.8548 | 0.8199 | 0.8552 | 0.8512 | 0.8637 |
| 30000 | 0.886 | 0.8664 | 0.8087 | 0.8549 | 0.8215 | 0.8552 | 0.8522 | 0.8648 |
| 100000 | 0.8861 | 0.8664 | 0.8089 | 0.8553 | 0.8212 | 0.8552 | 0.852 | 0.8657 |

Additional runs (column not identified in the source): 300000 lines, 0.8659; 1000000 lines, 0.8667.

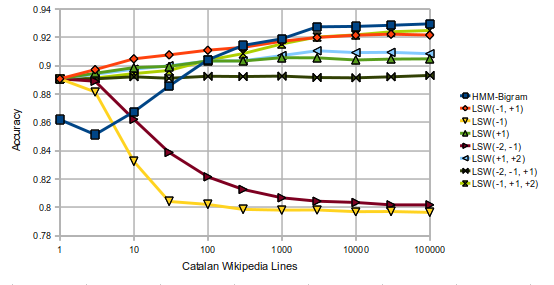

Catalan Wikipedia

| Lines | HMM_Bigram | LSW_L1R1 | LSW_L1 | LSW_R1 | LSW_L12 | LSW_R12 | LSW_L12R1 | LSW_L1R12 |
|---|---|---|---|---|---|---|---|---|
| 1 | 0.8619 | 0.8907 | 0.8907 | 0.8907 | 0.8907 | 0.8907 | 0.8907 | 0.8907 |
| 3 | 0.8513 | 0.8969 | 0.8812 | 0.8949 | 0.889 | 0.8939 | 0.8907 | 0.8912 |
| 10 | 0.8674 | 0.905 | 0.8256 | 0.9002 | 0.8606 | 0.8975 | 0.8921 | 0.8944 |
| 30 | 0.8856 | 0.9086 | 0.8048 | 0.9022 | 0.8386 | 0.8999 | 0.8911 | 0.8966 |
| 100 | 0.9041 | 0.9124 | 0.8018 | 0.9053 | 0.8212 | 0.9033 | 0.8925 | 0.9038 |
| 300 | 0.9143 | 0.9147 | 0.7989 | 0.906 | 0.8123 | 0.9034 | 0.8923 | 0.9085 |
| 1000 | 0.9189 | 0.9202 | 0.7985 | 0.9081 | 0.8067 | 0.9071 | 0.8926 | 0.9153 |
| 3000 | 0.9274 | 0.9232 | 0.7986 | 0.908 | 0.8041 | 0.9105 | 0.8916 | 0.9202 |
| 10000 | 0.9277 | 0.9249 | 0.7974 | 0.9065 | 0.8034 | 0.9092 | 0.8914 | 0.9217 |
| 30000 | 0.9285 | 0.926 | 0.7975 | 0.9072 | 0.8016 | 0.9094 | 0.8921 | 0.9239 |
| 100000 | 0.9295 | 0.9254 | 0.7969 | 0.9074 | 0.8015 | 0.9084 | 0.8931 | 0.9248 |

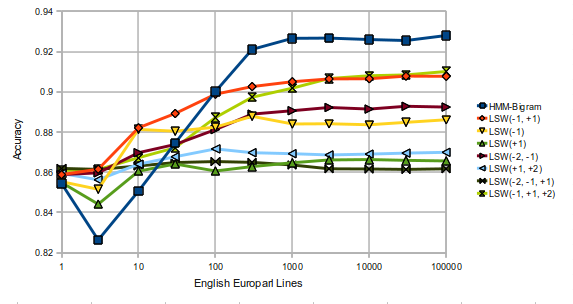

English Europarl

| Lines | HMM_Bigram | LSW_L1R1 | LSW_L1 | LSW_R1 | LSW_L12 | LSW_R12 | LSW_L12R1 | LSW_L1R12 |
|---|---|---|---|---|---|---|---|---|
| 1 | 0.8258 | 0.8268 | 0.8234 | 0.8256 | 0.8263 | 0.8266 | 0.8277 | 0.8271 |
| 3 | 0.7991 | 0.8312 | 0.8329 | 0.8182 | 0.8311 | 0.8244 | 0.8274 | 0.8279 |
| 10 | 0.8294 | 0.852 | 0.8653 | 0.8342 | 0.8441 | 0.8343 | 0.829 | 0.8344 |
| 30 | 0.8494 | 0.8622 | 0.8659 | 0.8475 | 0.8516 | 0.8433 | 0.831 | 0.8415 |
| 100 | 0.8771 | 0.8742 | 0.8679 | 0.8449 | 0.8628 | 0.8498 | 0.8319 | 0.8586 |
| 300 | 0.8886 | 0.8807 | 0.8741 | 0.847 | 0.8732 | 0.8515 | 0.8325 | 0.8717 |
| 1000 | 0.8948 | 0.8843 | 0.8711 | 0.8486 | 0.8753 | 0.8524 | 0.8325 | 0.8783 |
| 3000 | 0.8942 | 0.8867 | 0.8708 | 0.8499 | 0.8781 | 0.8524 | 0.8328 | 0.8852 |
| 10000 | 0.8934 | 0.8874 | 0.8705 | 0.8499 | 0.8774 | 0.853 | 0.8323 | 0.8872 |
| 30000 | 0.8929 | 0.8886 | 0.8719 | 0.8497 | 0.8787 | 0.8539 | 0.8323 | 0.8888 |
| 100000 | 0.8945 | 0.8887 | 0.8736 | 0.8496 | 0.8787 | 0.854 | 0.8326 | 0.8902 |

Additional runs (column not identified in the source): 300000 lines, 0.8906; 1000000 lines, 0.891.

3.3 Graph

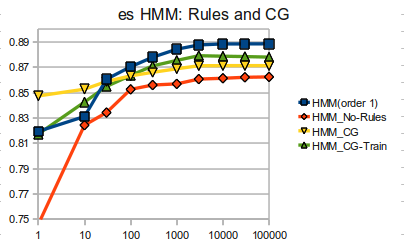

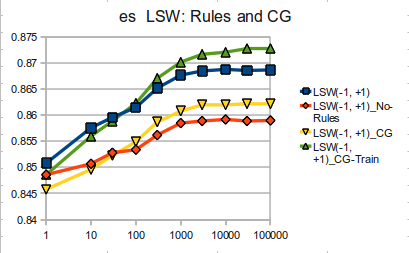

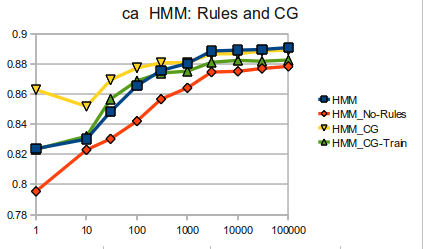

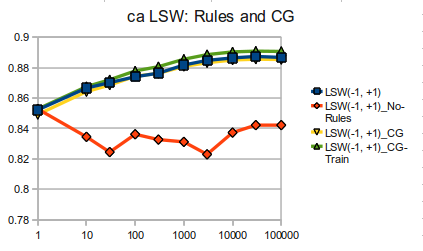

4. With or Without Rules and CG

4.1 data

es: HMM & LSW

| Lines | HMM | HMM_No-Rules | HMM_CG | HMM_CG-Train | LSW(-1, +1) | LSW(-1, +1)_No-Rules | LSW(-1, +1)_CG | LSW(-1, +1)_CG-Train |
|---|---|---|---|---|---|---|---|---|
| 1 | 0.8194 | 0.7462 | 0.8473 | 0.8173 | 0.8508 | 0.8486 | 0.8458 | 0.8486 |
| 10 | 0.8311 | 0.8243 | 0.8526 | 0.8425 | 0.8576 | 0.8507 | 0.8496 | 0.856 |
| 30 | 0.8605 | 0.8342 | 0.8587 | 0.8548 | 0.8596 | 0.8528 | 0.8523 | 0.8589 |
| 100 | 0.8703 | 0.8525 | 0.8631 | 0.8634 | 0.8615 | 0.8534 | 0.8549 | 0.8623 |
| 300 | 0.8778 | 0.8559 | 0.8659 | 0.871 | 0.8652 | 0.8562 | 0.8587 | 0.8671 |
| 1000 | 0.8841 | 0.8568 | 0.8687 | 0.8753 | 0.8677 | 0.8585 | 0.8608 | 0.8702 |
| 3000 | 0.8875 | 0.8606 | 0.8711 | 0.879 | 0.8685 | 0.8589 | 0.862 | 0.8717 |
| 10000 | 0.8883 | 0.8612 | 0.8712 | 0.8786 | 0.8688 | 0.8592 | 0.862 | 0.8721 |
| 30000 | 0.8883 | 0.862 | 0.8712 | 0.8783 | 0.8686 | 0.8589 | 0.8622 | 0.8728 |
| 100000 | 0.8884 | 0.8623 | 0.8712 | 0.8779 | 0.8687 | 0.859 | 0.8622 | 0.8728 |

ca: HMM & LSW

| Lines | HMM | HMM_No-Rules | HMM_CG | HMM_CG-Train | LSW(-1, +1) | LSW(-1, +1)_No-Rules | LSW(-1, +1)_CG | LSW(-1, +1)_CG-Train |
|---|---|---|---|---|---|---|---|---|
| 1 | 0.8237 | 0.7954 | 0.863 | 0.8234 | 0.8521 | 0.8528 | 0.8492 | 0.8528 |
| 10 | 0.8301 | 0.823 | 0.8519 | 0.832 | 0.8665 | 0.8345 | 0.8642 | 0.8675 |
| 30 | 0.8484 | 0.8303 | 0.8694 | 0.8568 | 0.8701 | 0.8245 | 0.8685 | 0.872 |
| 100 | 0.8656 | 0.8421 | 0.8775 | 0.8689 | 0.8739 | 0.8362 | 0.8743 | 0.8778 |
| 300 | 0.8757 | 0.8567 | 0.8808 | 0.8741 | 0.8763 | 0.8327 | 0.8762 | 0.8806 |
| 1000 | 0.8804 | 0.8642 | 0.8811 | 0.8753 | 0.8816 | 0.8312 | 0.8808 | 0.8855 |
| 3000 | 0.8887 | 0.8747 | 0.8868 | 0.8812 | 0.8847 | 0.823 | 0.8832 | 0.8885 |
| 10000 | 0.8892 | 0.8752 | 0.8869 | 0.8825 | 0.8863 | 0.8373 | 0.885 | 0.8903 |
| 30000 | 0.8897 | 0.8771 | 0.8887 | 0.8819 | 0.8873 | 0.8422 | 0.8855 | 0.8908 |
| 100000 | 0.8909 | 0.8785 | 0.8893 | 0.8827 | 0.8866 | 0.8422 | 0.8853 | 0.8906 |

- Note: HMM_CG-Train means that CG is used only in the training step (to process the training data), not in the tagging step.

4.2 graph

Week Plan and Progress

| week | date | plans | progress |

|---|---|---|---|

| Week -2 | 06.01-06.08 | Community Bonding | (1) Evaluation scripts (1st version) working for Recall, Precision, and F1-score. (2) SW tagger (1st version) working. |

| Week -1 | 06.09-06.16 | Community Bonding | (1) LSW tagger (1st version) working, without rules. |

| Week 01 | 06.17-06.23 | Implement the unsupervised version of the algorithm. | (1) LSW tagger working, without rules. (2) Check for and fix bugs. |

| Week 02 | 06.24-06.30 | Implement the unsupervised version of the algorithm. | (1) LSW tagger working, with rules. (2) Update evaluation scripts for unknown words. |

| Week 03 | 07.01-07.07 | Store the probability data in a clever way, allowing reading and editing using linguistic knowledge. Test using linguistic editing. | (1) Restructure tagger-data using inheritance. (2) Experiment on "How does the number of iterations affect tagger performance?" (Conclusion: usually fewer than 8.) (3) Extract large corpora from Wikipedia for Spanish and Catalan for the text-amount experiments. |

| Week 04 | 07.08-07.14 | Make tests, check for bugs, and documentation. | (1) Refine LSW tagger code for efficiency. (2) Experiment on "How does the amount of text affect tagger performance?" (Conclusion: 1000+ lines is usually enough.) (3) Run stability tests. |

| Deliverable #1 | | A SWPoST that works with probabilities. | |

| Week 05 | 07.15-07.21 | Implement FORBID restrictions, using LSW. Using the same options and TSX file as the HMM tagger. | (1) Refine LSW tagger code for efficiency. (2) Implement the LSW tagger with different window sizes for Catalan and Spanish and test them with different amounts of text. (Conclusion: window -1,+1 works best of all.) |

| Week 06 | 07.22-07.28 | Implement FORBID restrictions, using LSW. Using the same options and TSX file as the HMM tagger. | (1) Study the TnT tagger for preparing English tagged text. (2) Map tags between the TnT tagger and the LSW tagger. 6000 of the 8000 ambiguous words were mapped automatically. |

| Week 07 | 07.29-08.04 | Implement ENFORCE restrictions, using LSW. Using the same options and TSX file as the HMM tagger. | (1) Hand-tag the 2000 ambiguous words that were not mapped automatically. |

| Week 08 | 08.05-08.11 | Make tests, check for bugs, and documentation. | (1) Experiment with different window settings and text amounts on English. (Conclusion: performance increases for all settings, unlike Spanish and Catalan, where it decreases under some settings.) (2) Experiment with randomizing the training text for Spanish. (Conclusion: the results are very similar.) (3) Further study of window -1,+1,+2. (Very little improvement, at the cost of many more parameters and higher memory usage.) |

| Deliverable #2 | | A SWPoST that works with FORBID and ENFORCE restrictions. | |

| Week 09 | 08.12-08.18 | Implement the minimized FST version. | (1) Experiment with the HMM and LSW taggers without rules. (2) Learn about CG. |

| Week 10 | 08.19-08.25 | Refine code. Optionally, implement the supervised version of the algorithm. | (1) Experiment with the HMM and LSW taggers without rules. (2) Experiment with the HMM and LSW taggers with CG. |

| Week 11 | 08.26-09.01 | Make tests, check the code and documentation. Optionally, study further possible improvements. | (1) Re-run the experiments to check code and data. (2) Write the first version of the report. |

| Week 12 | 09.02-09.08 | Make tests, check the code and documentation. | |

| Deliverable #3 | | A full implementation of the SWPoST in Apertium. | |

General Progress

2013-08-27: Experiment using CG with the LSW tagger.

2013-08-20: Experiment with the HMM and LSW taggers without rules.

2013-08-09: Experiment with different window settings and text amounts on English.

2013-08-01: Hand-tag the English words that were not automatically mapped from the TnT tagger.

2013-07-26: Map tags between TnT tagger and LSW tagger.

2013-07-17: Refine LSW tagger code for efficiency.

2013-07-06: Restructure tagger data using inheritance. Delete the intermediate SW tagger implementation.

2013-06-28: LSW tagger working, with rules.

2013-06-20: LSW tagger working, without rules.

2013-06-11: SW tagger working. Evaluation scripts working.

2013-05-30: Start.

Detailed progress

2013-09-03

1. Finished the first version of the report.

2013-08-27

1. Finished the experiments with the HMM and LSW taggers without rules.

2. Managed to use CG together with the LSW tagger.

2013-08-09

1. Finished hand-tagging the English test set.

2. Experimented with different window settings and text amounts on English.

3. Experimented with randomizing the training text for Spanish.

4. Experimented further with the -1,+1,+2 window.
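The text-amount and randomization experiments both need training sets of increasing size cut from one corpus. The following is a minimal sketch of one way to prepare them. The function name `training_subsets` and the use of a seeded shuffle are assumptions for illustration; the sizes follow the "Lines" column of the result tables.

```python
import random

# Training-set sizes, following the "Lines" column of the result tables.
SIZES = [1, 3, 10, 30, 100, 300, 1000, 3000, 10000, 30000, 100000]


def training_subsets(lines, sizes=SIZES, seed=None):
    """Return {size: first `size` lines}, skipping sizes larger than the corpus.

    With seed=None the corpus order is kept; with a seed the lines are
    shuffled first, so every subset is a random sample (hypothetical setup).
    """
    pool = list(lines)
    if seed is not None:
        random.Random(seed).shuffle(pool)
    return {n: pool[:n] for n in sizes if n <= len(pool)}
```

Because every subset is a prefix of the same ordering, each smaller training set is contained in the larger ones, which keeps the runs comparable.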

2013-08-01

1. Check the algorithm and analyse cases.

2. Hand-tag the remaining 20% of ambiguous words that were not mapped automatically.

2013-07-26

1. Study the usage of the TnT tagger.

2. Study the relationship between the tagset of the TnT tagger and that of the Apertium tagger.

3. Develop algorithms for automatic mapping between the two tagsets; 80% of the ambiguous words are mapped successfully.
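The mapping step can be pictured with the following minimal sketch. It is not the algorithm used in the project: the table `TNT_TO_APERTIUM` and its entries are hypothetical examples, and the rule (accept a mapped tag only if it is among the tags the analyser proposes for the word) is an assumption. Words that cannot be mapped are collected for hand-tagging.

```python
# Hypothetical mapping table; the real tag correspondences are not listed here.
TNT_TO_APERTIUM = {"NN": "n", "JJ": "adj", "RB": "adv"}


def map_tag(tnt_tag, candidates, table=TNT_TO_APERTIUM):
    """Return the mapped tag if it is one of the word's candidate tags, else None."""
    mapped = table.get(tnt_tag)
    return mapped if mapped in candidates else None


def map_corpus(tokens, table=TNT_TO_APERTIUM):
    """Split tokens into automatically mapped ones and ones left for hand-tagging.

    tokens: iterable of (word, tnt_tag, candidates), where candidates is the
            set of tags proposed for the ambiguous word.
    """
    mapped, unmapped = [], []
    for word, tnt_tag, candidates in tokens:
        tag = map_tag(tnt_tag, candidates, table)
        if tag is None:
            unmapped.append(word)
        else:
            mapped.append((word, tag))
    return mapped, unmapped
```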

2013-07-15

1. Follow the training and tagging procedure step by step, using a small corpus of several sentences, so that parameters can be calculated by hand.

2. No significant bugs found during the training and tagging procedure.

2013-07-10

1. Managed to get supervised HMM training running.

2. Experimented with windows +1+2 and -1+1+2. The results show that the right contexts are more important than the left contexts.

2013-07-06

1. Restructure the 'tagger_data' class, using inheritance for HMM and LSW respectively.

2. Make the corresponding changes to the surrounding code, including tagger, tsx_reader, hmm, lswpost, filter-ambiguity-class, apply-new-rules, read-words, etc.

3. Delete the swpost tagger, which served as an intermediate implementation, and replace it with the final LSW tagger, which works well and supports rules.

2013-07-03

1. Update the evaluation scripts to take unknown words into account. The evaluation script can now report Recall, Precision, and F1-score.

2. Experiment with different amounts of text for training the LSW tagger.

3. Experiment with different window sizes for training the LSW tagger.

4. The experiments show strange results.

5. Checking the implementation for potential bugs.

2013-06-28

1. Add find_similar_ambiguity_class to the tagging procedure, so that a new ambiguity class does not crash the tagger.

2. Fix a bug in the normalization factor. The computation is now correct, but tagging quality does not improve.

3. Replace the SW tagger with the LSW tagger, so the "-w" option now selects the LSW tagger.
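The idea behind falling back to a similar ambiguity class can be sketched as follows. This is not the project's implementation: the similarity criterion used here (Jaccard overlap between tag sets) is an assumption chosen for illustration, and only the function name is taken from the notes above.

```python
def find_similar_ambiguity_class(new_class, known_classes):
    """Return the known ambiguity class closest to new_class.

    new_class:     iterable of tags for a class not seen in training
    known_classes: non-empty list of frozensets seen in training
    Similarity is Jaccard overlap (assumed criterion); ties keep the
    first class in known_classes.
    """
    new_class = frozenset(new_class)

    def jaccard(known):
        union = new_class | known
        return len(new_class & known) / len(union) if union else 1.0

    return max(known_classes, key=jaccard)
```

The tagger can then reuse the parameters of the returned class instead of failing on the unseen one.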

2013-06-23

1. Implement a light sliding-window (LSW) tagger. This tagger is based on the SW tagger, with the "parameter reduction" described in the 2005 paper.

2. Add rule support to the LSW tagger. The rules help improve tagging quality.

2013-06-21

1. Add a ZERO constant. Because some comparisons involve doubles, a reasonably precise threshold is needed.

2. Fix bugs in the initialization procedure and the iteration formula.

3. Check function style so that the new code is consistent with the existing code.

2013-06-20

1. Use heap space for the 3-dimensional parameters. This makes it possible to train successfully without manually setting the 'stack' limit in the environment.

2. Add a retrain() function to the SW tagger. Its logic is the same as that of the HMM tagger: it runs several more iterations starting from the current parameters.

3. Bug fix: avoid '-nan' parameters.

4. tagger_data now writes only non-ZERO values. This saves a lot of disk space, reducing the parameter file from 100M to 200k.
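Writing only the non-ZERO values amounts to a sparse encoding of the 3-dimensional parameter array. The following minimal sketch shows the idea; it is not the tagger's file format. The threshold value for `ZERO` and the `(i, j, k, value)` entry layout are assumptions.

```python
ZERO = 1e-10  # assumed threshold; the value used by the tagger is not given here


def to_sparse(params, zero=ZERO):
    """Keep only entries of a 3-d nested list whose magnitude exceeds `zero`."""
    entries = []
    for i, plane in enumerate(params):
        for j, row in enumerate(plane):
            for k, value in enumerate(row):
                if abs(value) > zero:
                    entries.append((i, j, k, value))
    return entries


def from_sparse(entries, shape):
    """Rebuild the dense 3-d nested list; missing entries become 0.0."""
    n1, n2, n3 = shape
    params = [[[0.0] * n3 for _ in range(n2)] for _ in range(n1)]
    for i, j, k, value in entries:
        params[i][j][k] = value
    return params
```

When most parameters are zero, the list of entries is far smaller than the dense array, which is consistent with the file-size reduction reported above.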

2013-06-19

1. Add print_para_matrix() for debugging the SW tagger. This function prints only the non-ZERO parameters in the 3-d matrix.

2. Add support for debug, EOS, and null_flush. This makes the tagger run stably when called by other programs.

2013-06-17

1. Study the morpho_stream class in depth to understand its members and functions.

2. Refine the reading procedure of the SW tagger so that it is simpler and more stable.

2013-06-13

1. Fix a bug in the tagging procedure: the initial tag score should be -1 instead of 0.
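One plausible reason the initial value matters, shown as a minimal sketch rather than the tagger's actual code: if scores are non-negative and the best score starts at 0, a strict comparison never selects a tag whose score is exactly 0, so a word whose candidates all score 0 ends up with no tag. Starting below every valid score avoids that. The function name `best_tag` is hypothetical.

```python
def best_tag(scores):
    """Pick the highest-scoring tag from {tag: score}, assuming scores >= 0."""
    best, best_score = None, -1.0  # below every valid score, so a 0 score can still win
    for tag, score in scores.items():
        if score > best_score:
            best, best_score = tag, score
    return best
```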

2013-06-12

1. Add the "-w" option for the SW tagger. The SW tagger is not a drop-in replacement for the current HMM tagger but an extension, so the default tagger for Apertium is still the HMM tagger. If the "-w" option is specified, the SW tagger is used.

2. Add support for detecting the end of the morpho_stream.

3. The first working version is OK :)

2013-06-11

1. Fix the bug in the last version, caused mainly by the read() method in the TSX reader. The read and write methods in tsx_reader and tagger_data are re-implemented, because they differ from those for the HMM.

2. Implement the compression part of the SW tagger probabilities. These parameters are stored in a 3-d array.

3. Debug the HMM tagger to see how a tagger should work together with the whole pipeline.

2013-06-10

1. Implement a basic version of the SW tagger. It still has bugs.

2. The training and tagging procedures strictly follow the 2004 paper.
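For orientation, the tagging side of a sliding-window tagger can be sketched as below. This is a simplified illustration, not the project's code or a faithful reproduction of the paper: it assumes a window of one word on each side, contexts given by the ambiguity classes of the neighbouring words, and a score table keyed by (left class, tag, right class). The `boundary` class used to pad the sentence is also an assumption.

```python
def tag_sentence(classes, scores, boundary=frozenset({"EOS"})):
    """Choose one tag per word from its ambiguity class.

    classes: list of frozensets, the ambiguity class of each word
    scores:  dict mapping (left_class, tag, right_class) to a score;
             missing keys count as 0.0
    """
    padded = [boundary] + list(classes) + [boundary]
    tags = []
    for i in range(1, len(padded) - 1):
        left, current, right = padded[i - 1], padded[i], padded[i + 1]
        # sorted() makes the choice deterministic when scores tie
        tags.append(max(sorted(current), key=lambda t: scores.get((left, t, right), 0.0)))
    return tags
```

Unambiguous words have a single candidate and are returned unchanged; only ambiguous words actually consult the score table.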

2013-05-30

1. Start the project.